Stack Exchange Network

Stack Exchange network consists of 183 Q&A communities including Stack Overflow , the largest, most trusted online community for developers to learn, share their knowledge, and build their careers.

Q&A for work

Connect and share knowledge within a single location that is structured and easy to search.

What is the fastest algorithm for this assignment problem?

I have a 2x2 grid and I have 5 tokens. I want to place 4 of the 5 tokens on the grid.

Each token has a different value depending on where it is placed on the grid. Essentially, if a token should not be placed in a certain position it is given a value of 20 there; otherwise it has a score lower than 20.

I am writing a program that needs to figure out which 4 tokens should be placed, in order to use the ones with the lowest value possible.

I need this part of the program to be as fast as possible. I'm wondering if there is an optimal algorithm I should use. I have been researching and came across the Hungarian algorithm but I'm wondering if there is another option I should be considering.

Here is an example of the problem:

My grid has its positions labelled a, b, c, d ...

+--------+--------+
|   c    |   d    |
|        |        |
+--------+--------+
|   a    |   b    |
|        |        |
+--------+--------+

And I have the following tokens with corresponding values for each location on the grid...

            a   b   c   d
token_p = [20, 20, 15, 20]
token_r = [ 1,  1, 20, 20]
token_s = [15, 20, 20, 20]
token_t = [20, 10, 20, 10]
token_u = [20, 20,  5, 20]

The answer should be:

token_s = a (value 15)

token_r = b (value 1)

token_u = c (value 5)

token_t = d (value 10)
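For a grid this small, exhaustive search is a reasonable baseline to check any faster method against: there are only 5·4·3·2 = 120 ways to put 4 of the 5 tokens on the 4 positions. The following is a minimal sketch, assuming the cost table from the example; `best_placement` is a hypothetical helper name, not something from the question.

```python
from itertools import permutations

# Cost of each token on positions a, b, c, d (taken from the example above).
COSTS = {
    "p": [20, 20, 15, 20],
    "r": [1, 1, 20, 20],
    "s": [15, 20, 20, 20],
    "t": [20, 10, 20, 10],
    "u": [20, 20, 5, 20],
}
SLOTS = "abcd"


def best_placement(costs, slots):
    """Try every ordered choice of len(slots) tokens and keep the cheapest."""
    best_total, best_assignment = None, None
    for chosen in permutations(list(costs), len(slots)):
        # chosen[i] is the token placed on slots[i]
        total = sum(costs[token][i] for i, token in enumerate(chosen))
        if best_total is None or total < best_total:
            best_total, best_assignment = total, dict(zip(slots, chosen))
    return best_total, best_assignment


total, placement = best_placement(COSTS, SLOTS)
print(total, placement)
```

This reproduces the expected answer with a total of 31. Exhaustive search stops being practical quickly as the grid grows, which is where the Hungarian algorithm becomes relevant.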

I would create a weighted $K_{t, n}$ graph, with tokens in one partition and grid slots on the other, and use a greedy bipartite matching algorithm.

Here is a tutorial on general bipartite matching: http://www.dreamincode.net/forums/topic/304719-data-structures-graph-theory-bipartite-matching/

You will have to adapt it for a greedy approach.


- Corpus ID: 9914929

QuickMatch--a very fast algorithm for the assignment problem

- J. Orlin, Yusin Lee

- Published 1993

- Computer Science



Hungarian Algorithm for Assignment Problem | Set 1 (Introduction)

1. For each row of the matrix, find the smallest element and subtract it from every element in its row.

2. Do the same (as step 1) for all columns.

3. Cover all zeros in the matrix using the minimum number of horizontal and vertical lines.

4. Test for optimality: if the minimum number of covering lines is n, an optimal assignment is possible and we are finished. Otherwise, if there are fewer than n lines, we haven’t found the optimal assignment and must proceed to step 5.

5. Determine the smallest entry not covered by any line. Subtract this entry from each uncovered row, and then add it to each covered column. Return to step 3.
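The first two steps (row reduction, then column reduction) can be sketched in a few lines. This is only an illustration of those two steps, not the full algorithm; the 3 x 3 matrix and the function name `reduce_rows_and_columns` are assumptions made for the example.

```python
def reduce_rows_and_columns(cost):
    """Subtract each row's minimum, then each column's minimum."""
    # Row reduction: every row gets at least one zero.
    reduced = [[x - min(row) for x in row] for row in cost]
    # Column reduction on the row-reduced matrix: every column gets a zero too.
    col_mins = [min(col) for col in zip(*reduced)]
    return [[x - m for x, m in zip(row, col_mins)] for row in reduced]


example = [[4, 1, 3],
           [2, 0, 5],
           [3, 2, 2]]
reduced = reduce_rows_and_columns(example)
print(reduced)  # [[2, 0, 2], [1, 0, 5], [0, 0, 0]]
```

After these two steps every row and every column contains at least one zero, which is what the line-covering test then works on.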


Explanation for above simple example:

An example that doesn’t lead to an optimal value in the first attempt: in the above example, the first check for optimality did give us a solution. What if the number of covering lines is less than n?

Time complexity: O(n^3), where n is the number of workers and jobs. This bound holds for efficient implementations of the Hungarian algorithm.

Space complexity: O(n^2), where n is the number of workers and jobs. The algorithm stores an n x n cost matrix holding the cost of assigning each worker to each job, plus additional arrays of size n for the labels, matches, and auxiliary information.
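To make the O(n^3) bound concrete, here is a minimal sketch of a commonly used formulation that keeps row and column potentials and grows the matching one row at a time along a shortest augmenting path. Note that it is not the line-covering procedure described in the steps above, although it solves the same problem; the function name `hungarian` and the test matrices are assumptions for illustration.

```python
def hungarian(cost):
    """Min-cost assignment for an n x m matrix with n <= m.

    Returns (total, assignment) where assignment[i] is the column of row i.
    Internally rows and columns are 1-based; index 0 is a sentinel.
    """
    n, m = len(cost), len(cost[0])
    INF = float("inf")
    u = [0] * (n + 1)    # row potentials
    v = [0] * (m + 1)    # column potentials
    p = [0] * (m + 1)    # p[j] = row currently matched to column j (0 = free)
    way = [0] * (m + 1)  # way[j] = previous column on the augmenting path
    for i in range(1, n + 1):
        p[0] = i
        j0 = 0
        minv = [INF] * (m + 1)
        used = [False] * (m + 1)
        while True:
            used[j0] = True
            i0 = p[j0]
            delta, j1 = INF, 0
            for j in range(1, m + 1):
                if not used[j]:
                    cur = cost[i0 - 1][j - 1] - u[i0] - v[j]  # reduced cost
                    if cur < minv[j]:
                        minv[j], way[j] = cur, j0
                    if minv[j] < delta:
                        delta, j1 = minv[j], j
            # Shift potentials so the cheapest unused column becomes tight.
            for j in range(m + 1):
                if used[j]:
                    u[p[j]] += delta
                    v[j] -= delta
                else:
                    minv[j] -= delta
            j0 = j1
            if p[j0] == 0:  # reached a free column: augment
                break
        while j0:
            j1 = way[j0]
            p[j0] = p[j1]
            j0 = j1
    assignment = [-1] * n
    for j in range(1, m + 1):
        if p[j]:
            assignment[p[j] - 1] = j - 1
    total = sum(cost[i][assignment[i]] for i in range(n))
    return total, assignment


print(hungarian([[4, 1, 3], [2, 0, 5], [3, 2, 2]]))
```

Each of the n outer iterations does at most m inner passes of O(m) work, which gives O(n·m^2), i.e. O(n^3) for square matrices. The extra storage beyond the cost matrix is a handful of arrays of length n or m.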

In the next post, we will be discussing implementation of the above algorithm. The implementation requires more steps as we need to find the minimum number of lines to cover all 0’s using a program.

References:

- http://www.math.harvard.edu/archive/20_spring_05/handouts/assignment_overheads.pdf

- https://www.youtube.com/watch?v=dQDZNHwuuOY


Fast Linear Assignment Problem using Auction Algorithm (mex)


Mex implementation of Bertsekas' auction algorithm [1] for a very fast solution of the linear assignment problem. The implementation is optimised for sparse matrices where an element A(i,j) = 0 indicates that the pair (i,j) is not possible as an assignment. Solving a sparse problem of size 950,000 by 950,000 with around 40,000,000 non-zero elements takes less than 8 mins. The method is also efficient for dense matrices, e.g. it can solve a 20,000 by 20,000 problem in less than 3.5 mins.

Both the auction algorithm and the Kuhn-Munkres algorithm have a worst-case time complexity of (roughly) O(N^3). However, the average-case time complexity of the auction algorithm is much better. Thus, in practice, with respect to running time, the auction algorithm outperforms the Kuhn-Munkres (or Hungarian) algorithm significantly.

When using this implementation in your work, in addition to [1], please cite our paper [2].

[1] Bertsekas, D.P. 1998. Network Optimization: Continuous and Discrete Models. Athena Scientific.

[2] Bernard, F., Vlassis, N., Gemmar, P., Husch, A., Thunberg, J., Goncalves, J. and Hertel, F. 2016. Fast correspondences for statistical shape models of brain structures. SPIE Medical Imaging, San Diego, CA, 2016.

The author would like to thank Guangning Tan for helpful feedback. If you want to use the Auction algorithm without Matlab, please check out Guangning Tan's C++ interface, available here: https://github.com/tgn3000/fastAuction.

Florian Bernard (2024). Fast Linear Assignment Problem using Auction Algorithm (mex) (https://www.mathworks.com/matlabcentral/fileexchange/48448-fast-linear-assignment-problem-using-auction-algorithm-mex), MATLAB Central File Exchange. Retrieved July 24, 2024.
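For readers unfamiliar with the auction idea, the following is a minimal Python sketch of the basic forward auction for a dense benefit matrix (maximisation). It is not the mex implementation described above: it has no sparsity handling and no epsilon scaling, and the function name `auction_assignment` and the example matrix are assumptions. Each unassigned person bids for the object with the best net value, raising its price by the gap to the second-best net value plus epsilon.

```python
def auction_assignment(benefit, eps=None):
    """Basic forward auction; returns assigned[i] = object won by person i.

    With integer benefits and eps < 1/n the final assignment is optimal.
    """
    n = len(benefit)
    if eps is None:
        eps = 1.0 / (n + 1)
    prices = [0.0] * n
    owner = [-1] * n     # owner[j] = person currently holding object j
    assigned = [-1] * n  # assigned[i] = object currently held by person i
    unassigned = list(range(n))
    while unassigned:
        i = unassigned.pop()
        best_j, best_val, second_val = -1, float("-inf"), float("-inf")
        for j in range(n):
            val = benefit[i][j] - prices[j]  # net value of object j for i
            if val > best_val:
                second_val = best_val
                best_val, best_j = val, j
            elif val > second_val:
                second_val = val
        if second_val == float("-inf"):  # only one object exists
            second_val = best_val
        # Bid: raise the price just enough that i is indifferent, plus eps.
        prices[best_j] += best_val - second_val + eps
        previous = owner[best_j]
        if previous != -1:               # outbid the previous holder
            assigned[previous] = -1
            unassigned.append(previous)
        owner[best_j] = i
        assigned[i] = best_j
    return assigned


cost = [[4, 1, 3], [2, 0, 5], [3, 2, 2]]
benefit = [[-c for c in row] for row in cost]  # minimise cost = maximise -cost
print(auction_assignment(benefit))
```

Because prices only ever rise by at least epsilon per bid, the loop terminates for a dense matrix with finite benefits; a production implementation would add epsilon scaling to cut down the number of bidding rounds.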


fastAuction_v2.6/

- sparseAssignmentProblemAuctionAlgorithm(A, epsilon, epsilonDecreaseFactor, verbosity, doFeasibilityCheck)

| Version | Release Notes |
|---|---|
| 2.6.0.0 | use of dmperm() to perform fast feasibility check |
| 2.4.0.0 | improved performance for very large sparse matrices |
| 2.2.0.0 | bugfix (concerned benefit matrices where for some of the rows exactly one assignment is allowed, thanks to Gary Guangning Tan for pointing out this problem) |
| 2.1.0.0 | bugfix related to the epsilon heuristic (2) |
| 2.0.0.0 | updated description |
| 1.2.0.0 | |
| 1.1.0.0 | |
| 1.0.0.0 | |


Difference between solving Assignment Problem using the Hungarian Method vs. LP

When trying to solve for assignments given a cost matrix, what is the difference between

- using SciPy's linear_sum_assignment function (which I think uses the Hungarian method), and

- describing the LP problem using an objective function with many boolean variables, adding the appropriate constraints, and sending it to a solver, such as through scipy.optimize.linprog?

Is the latter method slower than the Hungarian method's O(N^3), but does it allow more constraints to be added?


- The main difference between a mathematical model and a heuristic algorithm for a specific problem is that the former is more likely to prove optimality rather than just feasibility. One can then decide which to select according to one's needs. – A.Omidi Commented Mar 20, 2022 at 7:54

- @A.Omidi the Hungarian method is an exact algorithm – fontanf Commented Mar 20, 2022 at 8:26

- @fontanf, you are right. What I said was meant to compare exact and heuristic methods and is not specific to the Hungarian algorithm. Thanks for your hint. – A.Omidi Commented Mar 20, 2022 at 10:32

The main differences are probably that there is a somewhat large overhead you have to pay when solving the AP as a linear program: you have to build an LP model and ship it to a solver. In addition, an LP solver is a generalist. It solves all LP problems, and the focus in its development is to be fast on average across all LPs and also to be fast-ish in the pathological cases.

When using the Hungarian method, you do not build a model, you just pass the cost matrix to a tailored algorithm. You will then use an algorithm developed for that specific problem to solve it. Hence, it will most likely solve it faster since it is a specialist.

So if you want to solve an AP you should probably use the tailored algorithm. If you plan on extending your model to handle other more general constraints as well, you might need the LP after all.
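For reference, the LP being compared here can be written out as follows, with $c_{ij}$ the cost of assigning row $i$ to column $j$ and $x_{ij}$ the decision variable (the symbol $x_{ij}$ is introduced here for illustration):

$$
\begin{aligned}
\min \quad & \sum_{i=1}^{n}\sum_{j=1}^{n} c_{ij}\,x_{ij} \\
\text{s.t.} \quad & \sum_{j=1}^{n} x_{ij} = 1 \quad \text{for all } i, \\
& \sum_{i=1}^{n} x_{ij} = 1 \quad \text{for all } j, \\
& x_{ij} \ge 0 \quad \text{for all } i, j.
\end{aligned}
$$

The constraint matrix of this LP is totally unimodular, so its basic optimal solutions are integral. That is why the variables can be left continuous instead of declared boolean, and why a simplex-type LP solver returns a valid assignment. Extra side constraints can be appended to this model, but they generally destroy that structure.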

Edit: From a simple test in Python, my assumption is confirmed in this specific setup (which is to the advantage of the Hungarian method, I believe). The setup is as follows:

- A size is chosen in $n\in \{5,10,\dots,500\}$

- A cost matrix is generated. Each coefficient $c_{ij}$ is generated as a uniformly distributed integer in the range $[250,999]$ .

- The instance is solved using both linear_sum_assignment and as a linear program. The solution time is measured as wall clock time, and only the time spent by linear_sum_assignment and the solve function is timed (not building the LP and not generating the instance).

For each size, I have generated and solved ten instances, and I report the average time only.
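The timing protocol described above can be sketched with the standard library alone. This is not the code used for the experiment: `random_cost_matrix`, `average_solve_time` and the brute-force stand-in solver are hypothetical names, and in a real comparison the `solver` argument would be a wrapper around linear_sum_assignment or around the LP solve call.

```python
import random
import time
from itertools import permutations


def random_cost_matrix(n, low=250, high=999, rng=random):
    """n x n matrix of uniformly distributed integers in [low, high]."""
    return [[rng.randint(low, high) for _ in range(n)] for _ in range(n)]


def average_solve_time(solver, n, repeats=10, seed=0):
    """Average wall-clock time of solver(cost) over `repeats` instances.

    Instance generation is deliberately kept outside the timed region.
    """
    rng = random.Random(seed)
    elapsed = 0.0
    for _ in range(repeats):
        cost = random_cost_matrix(n, rng=rng)
        start = time.perf_counter()
        solver(cost)
        elapsed += time.perf_counter() - start
    return elapsed / repeats


def brute_force_solver(cost):
    """Stand-in solver for tiny n only; replace with the method under test."""
    n = len(cost)
    return min(sum(cost[i][perm[i]] for i in range(n))
               for perm in permutations(range(n)))


print(average_solve_time(brute_force_solver, n=5))
```

Fixing the seed means both methods can be timed on the same ten instances per size, which makes the averages directly comparable.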

And then there is of course the "but". I am not a ninja in Python and I have used pyomo for modelling the LPs. I believe that pyomo is known to be slow-ish when building models, hence I have only timed the solver.solve(model) part of the code, not building the model. There is however possibly a huge overhead cost coming from pyomo translating the model to "gurobian" (I use gurobi as solver).

- Do you have some benchmark results to support this claim? Intuitively, I would have thought that the Hungarian method would be much slower in practice. – fontanf Commented Mar 20, 2022 at 7:39

- @fontanf I only have anecdotal proof from past experiments. Maybe an LP solver can work faster for repeated solves, where you exploit that the model is already built and basis info is available. But I honestly don't know. – Sune Commented Mar 20, 2022 at 9:37

- It might be the case that the Hungarian method is faster for small problems (due to the overhead Sune mentioned for setting up an LP model) while simplex (or dual simplex, or maybe barrier) might be faster for large models because the setup cost is "amortized" better. (I'm just speculating here.) – prubin ♦ Commented Mar 20, 2022 at 15:30

- The Hungarian algorithm is, of course, O(n^3) for fully dense assignment problems. I don't know if there is a simplex bound explicitly for assignments. Simplex is exponential in the worst case and linear in variables plus constraints (n^2 + 2n here) in practice. But assignments are highly degenerate (n positive basics out of 2n rows). Dual simplex may fare better than primal. Hungarian is all integer for integer costs, whereas a standard simplex code won't be unless it knows to detect that in preprocessing. That may lead to some overhead for linear algebra. Ha, an idea for a class project! – mjsaltzman Commented Mar 20, 2022 at 17:04

- Just for the sake of completeness, here's the same with gurobipy instead of Pyomo. On my machine, all LPs (n = 500) are solved in less than a second compared to roughly 4 seconds with Pyomo. – joni Commented Mar 21, 2022 at 16:21


Algorithm for approximately solving quadratic assignment problems.

sogartar/faqap


Fast approximate quadratic assignment problem solver.

This is a Python implementation of an algorithm for approximately solving quadratic assignment problems described in

Joshua T. Vogelstein and John M. Conroy and Vince Lyzinski and Louis J. Podrazik and Steven G. Kratzer and Eric T. Harley and Donniell E. Fishkind and R. Jacob Vogelstein and Carey E. Priebe (2012) Fast Approximate Quadratic Programming for Large (Brain) Graph Matching. arXiv:1112.5507 .

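$$\min_{P \in \mathcal{P}} \langle F, P D P^{\mathsf{T}} \rangle$$

where $D, F \in \mathbb{R}^{n \times n}$, $\mathcal{P}$ is the set of $n \times n$ permutation matrices and $\langle \cdot, \cdot \rangle$ denotes the Frobenius inner product.

The implementation employs the Frank–Wolfe algorithm.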

GPU Support

GPU support is enabled through Torch. It is an optional dependency. In order to use the GPU you must pass Torch tensors that are on the CUDA device. If you pass CPU tensors the GPU will not be used.

Note that linear sum assignment, which is a part of the algorithm, is done on the CPU through SciPy. On a system with a GeForce RTX 2080 SUPER GPU and an AMD Ryzen Threadripper 2920X CPU (single thread at 3.5 - 4.3 GHz), for a float32 problem of size 128, linear sum assignment takes ~60% of the execution time. It may be possible to move that part to the GPU as well, but currently there are no good off-the-shelf GPU implementations for that. It is also unclear if there would be any significant speedup.

Installation

Dependencies.

- Python (>=3.5)

- NumPy (>=1.10)

- SciPy (>=1.4)

- Torch (optional)


How to tractably solve the assignment optimisation task

I'm working on a script that takes the elements from companies and pairs them up with the elements of people. The goal is to optimize the pairings such that the sum of all pair values is maximized (the value of each individual pairing is precomputed and stored in the dictionary ctrPairs).

They're all paired 1:1: each company has only one person, each person belongs to only one company, and the number of companies is equal to the number of people. I used a top-down approach with a memoization table (memDict) to avoid recomputing areas that have already been solved.

I believe that I could vastly improve the speed of what's going on here but I'm not really sure how. Areas I'm worried about are marked with #slow?; any advice would be appreciated (the script works for input lists of n < 15 but it gets incredibly slow for n > ~15).


- Sorry I guess that was confusing. I think it follows the model of a knapsack type problem, so the idea is to come up with a solution that has every element of companies paired with an element of people where the sum of all their pairings is the maximum possible value for all potential combinations (i.e. there would be no other arrangement of pairs that could yield a higher sum) – spencewah Commented Jun 11, 2009 at 16:35


4 Answers

To all those who wonder about the use of learning theory, this question is a good illustration. The right question is not about a "fast way to bounce between lists and tuples in python" — the reason for the slowness is something deeper.

What you're trying to solve here is known as the assignment problem: given two lists of n elements each and n×n values (the value of each pair), how to assign them so that the total "value" is maximized (or equivalently, minimized). There are several algorithms for this, such as the Hungarian algorithm (Python implementation), or you could solve it using more general min-cost flow algorithms, or even cast it as a linear program and use an LP solver. Most of these would have a running time of O(n^3).

What your algorithm above does is to try each possible way of pairing them. (The memoisation only helps to avoid recomputing answers for pairs of subsets, but you're still looking at all pairs of subsets.) This approach is at least Ω(n^2 · 2^(2n)). For n=16, n^3 is 4096 and n^2 · 2^(2n) is 1099511627776. There are constant factors in each algorithm of course, but see the difference? :-) (The approach in the question is still better than the naive O(n!), which would be much worse.) Use one of the O(n^3) algorithms, and I predict it should run in time for up to n=10000 or so, instead of just up to n=15.
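The two figures quoted for n=16 are easy to check. A quick sketch (the variable names are made up for the example):

```python
n = 16
cubic = n ** 3                        # work for an O(n^3) algorithm
subset_pairs = n ** 2 * 2 ** (2 * n)  # n^2 * 2^(2n), the bound for the memoised search
print(cubic)                   # 4096
print(subset_pairs)            # 1099511627776
print(subset_pairs // cubic)   # 268435456
```

So even before constant factors, the gap at n=16 is a factor of 2^28, and it multiplies by a little over four every time n grows by one.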

"Premature optimization is the root of all evil", as Knuth said, but so is delayed/overdue optimization: you should first carefully consider an appropriate algorithm before implementing it, not pick a bad one and then wonder what parts of it are slow. :-) Even badly implementing a good algorithm in Python would be orders of magnitude faster than fixing all the "slow?" parts of the code above (e.g., by rewriting in C).

- NB: The original title of the question was "Help requested: what's a fast way to bounce between lists and tuples in python?", hence the first paragraph of this answer. – Marcin Commented Feb 9, 2012 at 17:39

I see two issues here:

Efficiency: you're recreating the same remainingPeople sublists for each company. It would be better to create all the remainingPeople and all the remainingCompanies once and then do all the combinations.

Memoization: you're using tuples instead of lists so that they can serve as dict keys for memoization, but tuple equality is order-sensitive. In other words, (1,2) != (2,1). You'd be better off using sets and frozensets for this: frozenset((1,2)) == frozenset((2,1))
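A small sketch of the difference, using a made-up `memo` dictionary rather than the question's memDict:

```python
# Tuples compare element by element, so order matters.
tuple_memo = {(1, 2): "cached"}
print((2, 1) in tuple_memo)             # False: a different key

# frozensets are hashable and ignore order, so both orderings hit the same entry.
memo = {frozenset((1, 2)): "cached"}
print(frozenset((2, 1)) in memo)        # True
```

This only helps when the cached value really depends on which elements remain and not on their order.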

remainingCompanies = companies[1:len(companies)]

Can be replaced with this line:

For a very slight speed increase. That's the only improvement I see.
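One shorter slice with identical behaviour, which may be what this answer had in mind, is `companies[1:]`. The `companies` list below is made up for the check:

```python
companies = ["acme", "globex", "initech"]

# An omitted stop index means "to the end", so no len() call is needed.
remaining_long = companies[1:len(companies)]
remaining_short = companies[1:]
print(remaining_long == remaining_short)
```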

If you want to get a copy of a tuple as a list you can do mylist = list(mytuple)


Algorithm for a list of best solutions to the Assignment problem

I am trying to brute force a classical substitution cipher. The problem is that there are $26!$ possible keys. So, I'd like to do frequency analysis to try likely keys first. Then, on the first $n$ tries I will use a dictionary to see if there are actual words in the decryption. That way, I don't need to try all possible keys.

So, as suggested here, I will use chi-squared testing. I was thinking of making a matrix, best illustrated with an example. Suppose in an alphabet {A,B,C} the letter frequencies are 50%, 30% and 20% respectively, and that in a ciphertext the frequencies are 10%, 50% and 40% respectively. Then the A-B cell in the matrix is $(0.1-0.3)^2=0.04$, which is the error rate when a B in plain is encrypted as an A.

The Hungarian algorithm then gives me a frequency-analysis-wise optimal key: $A \mapsto C, B \mapsto A, C \mapsto B$. This is because the sum of the errors (0.01+0.00+0.01=0.02) is minimal. This is useful, but what I need is the $n$ best keys.
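The three-letter example is small enough to verify by enumeration. The sketch below builds the error matrix (rows are ciphertext letters, columns are plaintext letters) and tries all six keys; it uses brute force in place of the Hungarian algorithm, and the names `error`, `key_cost` and `best_key` are made up for the example.

```python
from itertools import permutations

letters = "ABC"
plain_freq = {"A": 0.5, "B": 0.3, "C": 0.2}
cipher_freq = {"A": 0.1, "B": 0.5, "C": 0.4}

# error[c][p]: squared frequency difference if cipher letter c stands for plain letter p
error = {c: {p: (cipher_freq[c] - plain_freq[p]) ** 2 for p in letters}
         for c in letters}


def key_cost(key):
    """Total error of a key given as {cipher letter: plain letter}."""
    return sum(error[c][p] for c, p in key.items())


keys = [dict(zip(letters, perm)) for perm in permutations(letters)]
best_key = min(keys, key=key_cost)
print(best_key, round(key_cost(best_key), 4))
```

Sorting `keys` by `key_cost` would give the full ranking for this toy case, which is a handy ground truth when testing any n-best method on small alphabets.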

The only thing I can come up with is to run the Hungarian algorithm, and then set one of the cells in the matrix above corresponding to the key found to a high value, so that if you run the algorithm again the key you find doesn't contain the mapping. So, in the example above, you could set A-C to 1 so that when you run the Hungarian algorithm again you find a different key that doesn't contain $A\mapsto C$.

However, this isn't guaranteed to find good keys in order. What would be a better way to extend the Hungarian algorithm to find the best $n$ keys?

Epilogue: after implementing this using D.W.'s approach below, it turned out that this method doesn't perform well enough to crack small-length (at least up to 1000 letters) cipher texts, because frequency analysis alone isn't enough. Performance may be improved by taking frequent digrams or trigrams into account, but I doubt this method can be as powerful as simple hill climbing.


- 1. If you'd like to know about better methods to crack classical substitution ciphers, I encourage you to ask a new question. There are some very cool techniques out there, but they utilize very different methods. 2. The question about how to enumerate the top-$n$ solutions to the assignment problem is still interesting in its own right. I'm trying to figure out if there's a way to reduce it to the problem of finding the $n$ shortest paths in a suitable graph, but I can't quite figure out how to make this idea work. – D.W. ♦ Commented Jan 4, 2016 at 20:48

Here's one technique to enumerate the best $n$ assignments, for any instance of the assignment problem. I suspect my approach isn't optimal, but it does run in polynomial time: it uses $O(nm)$ invocations of the Hungarian algorithm, where $m$ denotes the number of agents in the problem instance. In your example, $m=26$, so my approach requires $O(n)$ invocations of the Hungarian algorithm.

Let $A_1,A_2,A_3,\dots$ denote the assignments, from best to worst. $A_1$ is the best assignment; $A_2$ is the next-best; and so on. Our goal is to enumerate $A_1,\dots,A_n$.

You can find $A_1$ by solving the original assignment problem, e.g., with the Hungarian algorithm.

How can we find $A_2$, the second-best assignment? The idea is to use a case analysis. Let $v_1,\dots,v_m$ denote the $m$ agents in the problem instance, and let $A(v)$ denote the task assigned to agent $v$ by assignment $A$. We'll break down the space $\mathcal{S}$ of possible candidates for $A_2$ (i.e., the space of all assignments other than $A_1$) into the disjoint union $\mathcal{S} = \mathcal{S}_1 \cup \dots \cup \mathcal{S}_m$, where $\mathcal{S}_i$ is the space of assignments that agree with $A_1$ for $v_1,\dots,v_{i-1}$ but disagree with $A_1$ on $v_i$. (In other words, we look at the first agent that receives a different assignment in $A_1$ vs $A_2$. Then there are $m$ possibilities for that agent; we let $i$ denote its index, i.e., the index of the first agent whose assignment in $A_1$ is different from its assignment in $A_2$. This breaks down the space $\mathcal{S}$ into subspaces $\mathcal{S}_1,\dots, \mathcal{S}_m$, as listed before.)

Now the approach will be to find the best assignment in each $\mathcal{S}_i$, separately.

$\mathcal{S}_1$: We find the best assignment $A$ such that $A(v_1) \ne A_1(v_1)$ using one invocation of the Hungarian algorithm, by changing the cost of the edge $(v_1,A_1(v_1))$ to $\infty$ (or some very large positive number) and then re-running the Hungarian algorithm. This finds the best assignment out of all assignments that assign $v_1$ to something different than $A_1$ did.

$\mathcal{S}_2$: We find the best assignment $A$ such that $A(v_1) = A_1(v_1)$ and $A(v_2) \ne A_1(v_2)$ using one invocation of the Hungarian algorithm: force $v_1$ onto $A_1(v_1)$ (for example, by removing $v_1$ and the task $A_1(v_1)$ from the instance, or by changing the cost of every other edge incident to $v_1$ to $\infty$), and change the cost of the edge $(v_2,A_1(v_2))$ to $\infty$.

$\mathcal{S}_i$: Similarly, for each $i$, we can find the best assignment $A$ such that $A(v_j) = A_1(v_j)$ for all $j=1,2,\dots,i-1$ and such that $A(v_i) \ne A_1(v_i)$, using one invocation of the Hungarian algorithm.
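As a rough sketch of one such subproblem: the helper below pins agents $v_1,\dots,v_{i-1}$ to their $A_1$ tasks by making all their other edges infinitely expensive, forbids the edge $(v_i, A_1(v_i))$, and then solves the modified instance. Agents are 0-indexed in the code, `solve` is a brute-force stand-in for the Hungarian algorithm, and the function names and the toy cost matrix are my own assumptions for illustration.

```python
from itertools import permutations

INF = float("inf")


def solve(cost):
    """Brute-force stand-in for the Hungarian algorithm.

    Returns (total, assignment), or None if every assignment has infinite cost.
    """
    n = len(cost)
    best = None
    for perm in permutations(range(n)):
        total = sum(cost[v][perm[v]] for v in range(n))
        if total < INF and (best is None or total < best[0]):
            best = (total, perm)
    return best


def best_in_subspace(cost, a1, i):
    """Best assignment agreeing with a1 on agents 0..i-1 and differing on agent i."""
    c = [row[:] for row in cost]
    for j in range(i):                 # pin the earlier agents to their a1 tasks
        for t in range(len(c)):
            if t != a1[j]:
                c[j][t] = INF
    c[i][a1[i]] = INF                  # forbid agent i's a1 task
    return solve(c)


# Toy 3x3 instance (hypothetical numbers); its best assignment is (2, 0, 1).
cost = [[16, 4, 1],
        [0, 4, 9],
        [1, 1, 4]]
a1 = solve(cost)[1]
candidates = [best_in_subspace(cost, a1, i) for i in range(len(cost))]
print(candidates)
```

A subspace can be empty (here the last one is, because once the first two agents are pinned the third has no choice left), which is why the helper may return `None`.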

This gives us $m$ assignments, i.e., $m$ candidates for $A_2$. By construction, each one of these assignments is different from $A_1$. Also, by construction, the subspaces together cover the whole space of assignments that are different from $A_1$. Therefore, $A_2$ is the best of these $m$ candidates: we compare their costs and take the cheapest one as $A_2$.

That finds the second-best assignment. How can we find $A_3$, the third-best assignment? Well, the same ideas apply: we'll use a case split, but now the case split will be a little more involved. Suppose that $v_i$ is the first agent where $A_1$ and $A_2$ disagree (i.e., $A_1$ and $A_2$ agree on $v_1,\dots,v_{i-1}$ but disagree on $v_i$, so that $A_2 \in \mathcal{S}_i$). Then we can break down the space of possibilities for $A_3$ by looking at the first agent that receives a different assignment from $A_2$, or from $A_1$.

In particular, let $\mathcal{T}$ denote the space of possible candidates for $A_3$ (i.e., the space of all assignments other than $A_1$ or $A_2$). We can partition it into the disjoint union

$$\mathcal{T} = \mathcal{S}_1 \cup \dots \cup \mathcal{S}_{i-1} \cup (\mathcal{T}_1 \cup \dots \cup \mathcal{T}_m) \cup \mathcal{S}_{i+1} \cup \dots \cup \mathcal{S}_m.$$

In other words, since $A_2 \in \mathcal{S}_i$ and we now want to exclude $A_2$ from the space of allowable assignments, we partition $\mathcal{S}_i$ into $\mathcal{S}_i = \{A_2\} \cup \mathcal{T}_1 \cup \dots \cup \mathcal{T}_m$ and remove $A_2$. Here $\mathcal{T}_j$ denotes the set of assignments in $\mathcal{S}_i$ that agree with $A_2$ on $v_1,\dots,v_{j-1}$ but disagree with $A_2$ on $v_j$ (and, if $j=i$, disagree with $A_1$ on $v_j$ as well).

Now, we use the Hungarian algorithm to find the best assignment in each of $\mathcal{S}_1, \dots, \mathcal{S}_{i-1}, \mathcal{T}_1, \dots, \mathcal{T}_m, \mathcal{S}_{i+1}, \dots, \mathcal{S}_m$. This is doable using the techniques shown above, using one invocation of the Hungarian algorithm per subspace. Finally, we let $A_3$ be the best of all the solutions found.

We can continue in this way, at each step identifying the next-best by decomposing the space of remaining assignments into multiple subspaces and invoking the Hungarian algorithm on each subspace. At each step, we introduce at most $m$ new subspaces, and we can reuse the previously-obtained results for the other subspaces. Therefore, on each step we make at most $m$ invocations of the Hungarian algorithm, so the total number of invocations of the Hungarian algorithm is $O(nm)$.
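Putting the pieces together, the whole procedure can be sketched with a priority queue of subspaces, each described by a set of forced edges and a set of forbidden edges together with its best assignment. This is only an illustrative sketch: `solve` is again a brute-force stand-in for one Hungarian-algorithm call (in practice you would swap in a polynomial-time solver such as SciPy's `linear_sum_assignment` on a suitably modified matrix), and all names and the toy matrix are assumptions of mine.

```python
import heapq
from itertools import permutations


def solve(cost, forced, forbidden):
    """Stand-in for one Hungarian-algorithm call on a constrained instance.

    forced: dict agent -> task that must be used.
    forbidden: set of (agent, task) pairs that must not be used.
    Returns (total, assignment), or None if the subspace is empty.
    """
    n = len(cost)
    best = None
    for perm in permutations(range(n)):
        if any(perm[v] != t for v, t in forced.items()):
            continue
        if any(perm[v] == t for v, t in forbidden):
            continue
        total = sum(cost[v][perm[v]] for v in range(n))
        if best is None or total < best[0]:
            best = (total, perm)
    return best


def n_best(cost, n_wanted):
    """Return up to n_wanted assignments in order of nondecreasing cost."""
    results = []
    first = solve(cost, {}, frozenset())
    # Heap entries: (total, tie-breaker, assignment, forced, forbidden).
    heap = [(first[0], 0, first[1], {}, frozenset())]
    counter = 1
    while heap and len(results) < n_wanted:
        total, _, assign, forced, forbidden = heapq.heappop(heap)
        results.append((total, assign))
        # Split (subspace minus assign) by the first free agent that differs.
        fixed = dict(forced)
        for v in range(len(cost)):
            if v in forced:
                continue
            child_forbidden = forbidden | {(v, assign[v])}
            sol = solve(cost, fixed, child_forbidden)
            if sol is not None:
                heapq.heappush(
                    heap, (sol[0], counter, sol[1], dict(fixed), child_forbidden))
                counter += 1
            fixed[v] = assign[v]   # later children agree with assign on v
    return results


cost = [[16, 4, 1],
        [0, 4, 9],
        [1, 1, 4]]
ranking = n_best(cost, 4)
print(ranking)
```

Each popped assignment triggers at most $m$ new solver calls, and the solutions of all other subspaces stay in the heap untouched, which is where the $O(nm)$ bound on invocations comes from.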

There's probably a better way to do it, but if you can't find any other algorithm, this is one you could use. Note that this is a general technique for the problem of enumerating the $n$ best assignments for any instance of the assignment problem. It's not specific to your substitution-cipher example.

- Here, why do we need to assume that some of the matched edges (in the best assignment) remain intact in the new nodes that you create? Instead, can we not approach it like this: discard each existing matched edge from the best assignment one at a time, and then compute the best assignment in the modified setting. This gives a set of candidates for the next-best assignment. Among this set, as usual, the assignment having the minimum cost would be the desired result, i.e. the next-best assignment. Thank you. – akhil Commented May 21, 2023 at 13:40

- @akhil, that sounds like it would work, too. I think both approaches work. – D.W. ♦ Commented May 21, 2023 at 20:38

Chaos quantum bee colony algorithm for constrained complicate optimization problems and application of robot gripper

- Optimization

- Published: 23 July 2024

- Ruizi Ma ORCID: orcid.org/0000-0002-0645-7947 1 , 2 , 3 ,

- Junbao Gui 1 ,

- Jun Wen 1 &

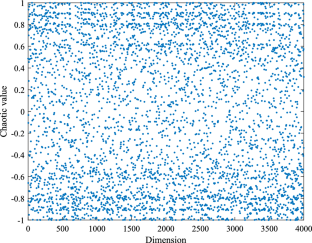

Global constrained optimization problems are very complex for engineering applications. To solve complicated and constrained optimization problems with fast convergence and accurate computations, a new quantum artificial bee colony algorithm using Sin chaos and Cauchy factor (SCQABC) is proposed. The algorithm introduces a quantum bit to initialize the population, which is then updated by the quantum rotation gate to enhance the convergence of the artificial bee colony algorithm (ABC). Sin chaos is introduced to process the individual positions, which improves the randomness and ergodicity of initializing individuals and results in a more diverse initial population. To overcome the upper limits of visiting target individual positions, Cauchy factor is used to mutate individuals for solving falling into local optimum problems. To evaluate the performance of the SCQABC, 20 classical benchmark functions and CEC-2017 are used. The practical engineering problems are also used to verify the practicability of SCQABC algorithm. Moreover, the experimental results will be compared with other well-known and progressive algorithms. According to the results, the SCQABC improves by 64.93% compared with the ABC and also has corresponding improvement compared with other algorithms. Its successful application to the robot gripper problem highlights its effectiveness in solving constrained optimization problems.

Data Availability Statement

All data generated or analyzed during this study are included in this article and can be obtained by contacting the corresponding author.

This work is funded by the Natural Science Foundation of Zhejiang Province (Grant No. LY22F030012), the National Natural Science Foundation of China (Grant No. 62003320) and Fundamental Research Funds for the Provincial Universities of Zhejiang (Grant No. 2021YW10).

Author information

Authors and affiliations.

College of Mechanical and Electrical Engineering, China Jiliang University, Hangzhou, 310018, China

Ruizi Ma, Junbao Gui, Jun Wen & Xu Guo

School of Electrical and Information Engineering, Tianjin University, Tianjin, 300072, China

Ningbo Yaohua Electric Company, Ningbo, 315324, China

Contributions

Ruizi Ma provided the methodology and implementation of the research. Ruizi Ma and Junbao Gui wrote the paper. Jun Wen and Xu Guo edited the paper.

Corresponding author

Correspondence to Ruizi Ma .

Ethics declarations

Conflict of interest.

The authors have no conflict of interest to declare that is relevant to the content of this article.

Additional information

Publisher's note.

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Since there are a lot of experimental data in this paper, which will affect the reading experience, these data are put in the Appendix.

See Tables 13 , 14 , 15 , 16 , 17 , 18 and 19 .

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Ma, R., Gui, J., Wen, J. et al. Chaos quantum bee colony algorithm for constrained complicate optimization problems and application of robot gripper. Soft Comput (2024). https://doi.org/10.1007/s00500-024-09877-8

Accepted : 14 May 2024

Published : 23 July 2024

DOI : https://doi.org/10.1007/s00500-024-09877-8

- Artificial bee colony

- Cauchy factor

- Global optimizations

The integrated planning of outgoing coil selection for retrieval, multi-crane scheduling, and location assignment to the incoming and blocking coils in steel coil warehouses

Recommendations

Multi-crane scheduling in steel coil warehouse

This paper considers a multi-crane scheduling problem commonly encountered in real warehouse operations in steel enterprises. A given set of coils are to be retrieved to their designated places. If a required coil is in upper level or in lower level ...

A grasp algorithm for m-machine flowshop scheduling problem with bicriteria of makespan and maximum tardiness

In this paper we address the problem of minimizing the weighted sum of makespan and maximum tardiness in an m-machine flow shop environment. This is a NP-hard problem in the strong sense. An attempt has been made to solve this problem using a ...

Coil Batching to Improve Productivity and Energy Utilization in Steel Production

This paper investigates a practical batching decision problem that arises in the batch annealing operations in the cold rolling stage of steel production faced by most large iron and steel companies in the world. The problem is to select steel coils from ...

Information

Published in.

Pergamon Press, Inc.

United States

Publication History

Author tags.

- Outgoing coil selection

- Multi-crane scheduling

- Greedy algorithm

- GRASP algorithm

- Ant colony system algorithm

- Research-article

QuickMatch: A Very Fast Algorithm for the Assignment Problem, by Yusin Lee and James B. Orlin. Abstract: In this paper, we consider the linear assignment problem defined on a bipartite network G = (U ∪ V, A). The problem may be described as assigning each person in a set U to a set V of tasks so as to minimize the total cost of the assignment. ...

The assignment problem is a fundamental combinatorial optimization problem. In its most general form, the problem is as follows: ... This is currently the fastest run-time of a strongly polynomial algorithm for this problem. If all weights are integers, ...

I need this part of the program to be as fast as possible. I'm wondering if there is an optimal algorithm I should use. I have been researching and came across the Hungarian algorithm but I'm wondering if there is another option I should be considering. Here is an example of the problem: My grid has its' positions labelled, a,b,c,d ...

The algorithm maintains a matching M and compatible prices p. Pf. Follows from Lemmas 2 and 3 and initial choice of prices. Theorem. The algorithm returns a min cost perfect matching. Pf. Upon termination M is a perfect matching, and p are compatible. Optimality follows from Observation 2. Theorem. The algorithm can be implemented in O(n^3 ...

We'll handle the assignment problem with the Hungarian algorithm (or Kuhn-Munkres algorithm). I'll illustrate two different implementations of this algorithm, both graph theoretic, one easy and fast to implement with O(n^4) complexity, and the other one with O(n^3) complexity, but harder to implement.

Hungarian algorithm steps for minimization problem. Step 1: For each row, subtract the minimum number in that row from all numbers in that row. Step 2: For each column, subtract the minimum number in that column from all numbers in that column. Step 3: Draw the minimum number of lines to cover all zeroes.
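Steps 1 and 2 quoted above are simple enough to sketch directly. The function name and the sample matrix below are hypothetical, and the sketch stops before the line-covering step, so it is not a full Hungarian-algorithm implementation.

```python
def reduce_rows_and_columns(cost):
    """Apply Step 1 (row reduction) and Step 2 (column reduction)."""
    # Step 1: subtract each row's minimum from every entry in that row.
    rows = [[x - min(row) for x in row] for row in cost]
    # Step 2: subtract each column's minimum from every entry in that column.
    col_mins = [min(col) for col in zip(*rows)]
    return [[x - m for x, m in zip(row, col_mins)] for row in rows]


example = [[4, 1, 3],
           [2, 0, 5],
           [3, 2, 2]]
reduced = reduce_rows_and_columns(example)
print(reduced)
```

After both reductions every row and every column contains at least one zero, which is what the later steps rely on.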

The theoretical analysis and computational testing supports the hypothesis that QuickMatch runs in linear time on randomly generated sparse assignment problems, and presents some theoretical justifications as to why the algorithm's performance is superior in practice to the usual SSP algorithm. In this paper, we consider the linear assignment problem defined on a bipartite network G = (U ∪ V, A).

The ε-scaling auction algorithm [5] and the Goldberg & Kennedy algorithm [13] are algorithms that solve the assignment problem. The ε-scaling auction algorithm operates like a real auction, where a set of persons U compete for a set of objects V. In this scenario, to each object is assigned a price which, in certain sense, represents

Includes bibliographical references (p. 25-27). Alfred P. Sloan School of Management, Massachusetts Institute of Technology

Time complexity: O(n^3), where n is the number of workers and jobs. This is because the algorithm implements the Hungarian algorithm, which is known to have a time complexity of O(n^3). Space complexity: O(n^2), where n is the number of workers and jobs. This is because the algorithm uses a 2D cost matrix of size n x n to store the costs of assigning each worker to a job, and additional ...

From this, we could solve it as a transportation problem or as a linear program. However, we can also take advantage of the form of the problem and put together an algorithm that takes advantage of it- this is the Hungarian Algorithm. The Hungarian Algorithm The Hungarian Algorithm is an algorithm designed to solve the assignment problem. We ...

Mex implementation of Bertsekas' auction algorithm [1] for a very fast solution of the linear assignment problem. The implementation is optimised for sparse matrices where an element A (i,j) = 0 indicates that the pair (i,j) is not possible as assignment. Solving a sparse problem of size 950,000 by 950,000 with around 40,000,000 non-zero ...

It solves all LP problems and focus in development is to be fast on average on all LPs and also to be fast-ish in the pathological cases. When using the Hungarian method, you do not build a model, you just pass the cost matrix to a tailored algorithm. You will then use an algorithm developed for that specific problem to solve it.

It is worth noting, however, that the fastest known algorithms for solving high-multiplicity "flow-based" assignment problems run in Ω(mn) worst-case time, so our new results now provide a significant algorithmic incentive to model assignment problems as stable allocation problems rather than flow problems.

(The rectangular linear assignment problem, as defined here). I know this can be done by duplicating the ... Is my problem in fact $\Theta(m^3)$? I.e., is the method of duplicating workers and using Kuhn-Munkres (as fast as) the fastest algorithm for solving the rectangular linear assignment problem (RLAP)?. I want to know because I have a ...

October 2023. Arizona State University/SCAI Report. ... Assignment Problem and Extensions, by Dimitri Bertsekas. Abstract: We consider the classical linear assignment problem, and we introduce new auction algorithms for its optimal and suboptimal solution. The algorithms are founded on duality theory, and are related to ideas of competitive bidding by ...

This is a Python implementation of an algorithm for approximately solving quadratic assignment problems described in. Joshua T. Vogelstein and John M. Conroy and Vince Lyzinski and Louis J. Podrazik and Steven G. Kratzer and Eric T. Harley and Donniell E. Fishkind and R. Jacob Vogelstein and Carey E. Priebe (2012) Fast Approximate Quadratic Programming for Large (Brain) Graph Matching.

There are a few papers which have fast algorithms for weighted bipartite graphs. A recent paper Ramshaw and Tarjan, 2012 "On Minimum-Cost Assignments in Unbalanced Bipartite Graphs" presents an algorithm called FlowAssign and Refine that solves for the min-cost, unbalanced, bipartite assignment problem and uses weight scaling to solve the perfect and imperfect assignment problems, but not ...

2. I want to solve job assignment problem using Hungarian algorithm of Kuhn and Munkres in case when matrix is not square. Namely we have more jobs than workers. In this case adding additional row is recommended to make matrix square. For example in the following link. And here task IV is assumed to be done.
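The padding trick can be sketched on the 5-token, 4-slot example from the question at the top of this page: add dummy entries of cost 0 until the matrix is square, solve the square problem, and ignore whatever lands in a dummy position. The helper name `pad_to_square` is hypothetical, and the brute-force search is only a stand-in for a real Hungarian-algorithm implementation.

```python
from itertools import permutations

# Token costs per grid slot (a, b, c, d), copied from the question.
tokens = {
    "p": [20, 20, 15, 20],
    "r": [1, 1, 20, 20],
    "s": [15, 20, 20, 20],
    "t": [20, 10, 20, 10],
    "u": [20, 20, 5, 20],
}
slots = ["a", "b", "c", "d"]


def pad_to_square(cost, fill=0):
    """Pad a rectangular cost matrix with dummy rows/columns of constant cost."""
    rows, cols = len(cost), max(len(r) for r in cost)
    size = max(rows, cols)
    padded = [row + [fill] * (size - len(row)) for row in cost]
    padded += [[fill] * size for _ in range(size - rows)]
    return padded


names = list(tokens)
square = pad_to_square([tokens[name] for name in names])

# Brute-force stand-in for the Hungarian algorithm on the padded 5x5 matrix.
n = len(square)
perm = min(permutations(range(n)),
           key=lambda p: sum(square[i][p[i]] for i in range(n)))

# Keep only real slots; a token sent to the dummy column is simply left out.
placement = {slots[perm[i]]: names[i] for i in range(n) if perm[i] < len(slots)}
total = sum(square[i][perm[i]] for i in range(n))
print(placement, total)
```

Because every dummy entry has the same cost, the padding does not change which real pairings are optimal; it only decides which token is left unplaced.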

This paper describes a new algorithm called QuickMatch for solving the assignment problem. QuickMatch is based on the successive shortest path (SSP) algorithm for the assignment problem, which in ...

What you're trying to solve here is known as the assignment problem: given two lists of n elements each and n×n values (the value of each pair), how to assign them so that the total "value" is maximized (or equivalently, minimized). There are several algorithms for this, such as the Hungarian algorithm ( Python implementation ), or you could ...

Multi-Objective Assignment Problems in Time-Critical Settings: An Application in Air Traffic Flow Management ... Amrit Pratap, and T. Meyarivan. 2000. A Fast Elitist Non-dominated Sorting Genetic Algorithm for Multi-objective Optimization: NSGA-II. In Parallel Problem Solving from Nature PPSN VI, Marc Schoenauer, ... algorithms for the bi ...

First, a mixed integer linear programming model is proposed. Further, a Greedy heuristic algorithm and two metaheuristics are developed to solve large-sized instances of the problem. Metaheuristics include a Greedy Randomized Adaptive Search Procedure (GRASP) and a hybrid algorithm that combines Ant Colony System with the GRASP (ACS-GRASP).