{{ activeMenu.name }}

- Python Courses

- JavaScript Courses

- Artificial Intelligence Courses

- Data Science Courses

- React Courses

- Ethical Hacking Courses

- View All Courses

Fresh Articles

- Python Projects

- JavaScript Projects

- Java Projects

- HTML Projects

- C++ Projects

- PHP Projects

- View All Projects

- Python Certifications

- JavaScript Certifications

- Linux Certifications

- Data Science Certifications

- Data Analytics Certifications

- Cybersecurity Certifications

- View All Certifications

- IDEs & Editors

- Web Development

- Frameworks & Libraries

- View All Programming

- View All Development

- App Development

- Game Development

- Courses, Books, & Certifications

- Data Science

- Data Analytics

- Artificial Intelligence (AI)

- Machine Learning (ML)

- View All Data, Analysis, & AI

- Networking & Security

- Cloud, DevOps, & Systems

- Recommendations

- Crypto, Web3, & Blockchain

- User-Submitted Tutorials

- View All Blog Content

- JavaScript Online Compiler

- HTML & CSS Online Compiler

- Certifications

- Programming

- Development

- Data, Analysis, & AI

- Online JavaScript Compiler

- Online HTML Compiler

Don't have an account? Sign up

Forgot your password?

Already have an account? Login

Have you read our submission guidelines?

Go back to Sign In

Normalization in DBMS: 1NF, 2NF, 3NF, and BCNF [Examples]

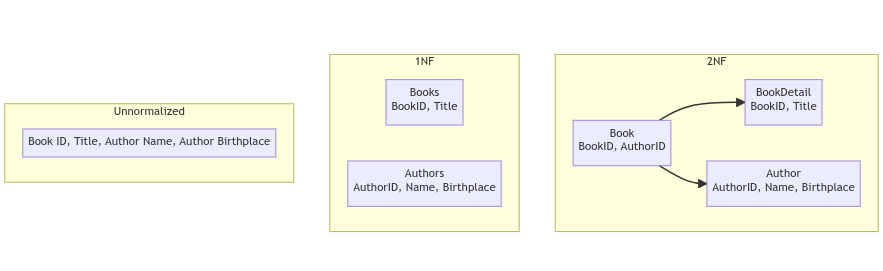

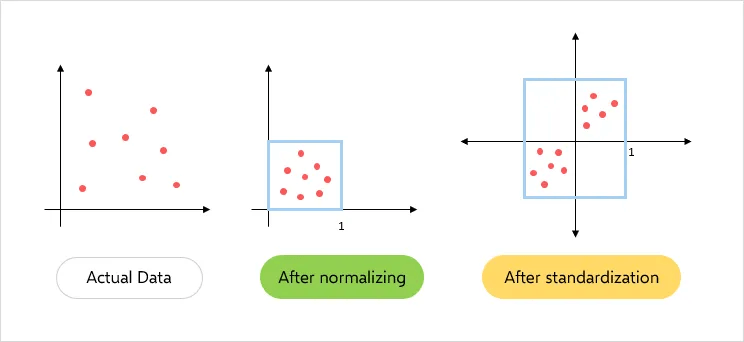

When developing the schema of a relational database, one of the most important aspects to be taken into account is to ensure that the duplication of data is minimized. We do this by carrying out database normalization, an important part of the database schema design process.

Here, we explain normalization in DBMS, explaining 1NF, 2NF, 3NF, and BCNF with explanations. First, let’s take a look at what normalization is and why it is important.

- What is Normalization in DBMS?

Database normalization is a technique that helps design the schema of the database in an optimal way. The core idea of database normalization is to divide the tables into smaller subtables and store pointers to data rather than replicating it.

Why Do We Carry out Database Normalization?

There are two primary reasons why database normalization is used. First, it helps reduce the amount of storage needed to store the data. Second, it prevents data conflicts that may creep in because of the existence of multiple copies of the same data.

If a database isn’t normalized, then it can result in less efficient and generally slower systems, and potentially even inaccurate data. It may also lead to excessive disk I/O usage and bad performance.

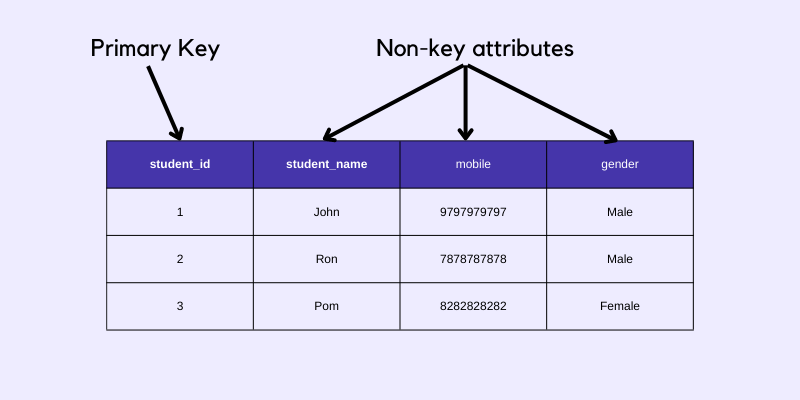

- What is a Key?

You should also be aware of what a key is. A key is an attribute that helps identify a row in a table. There are seven different types, which you’ll see used in the explanation of the various normalizations:

- Candidate Key

- Primary Key

- Foreign Key

- Alternate Key

- Composite Key

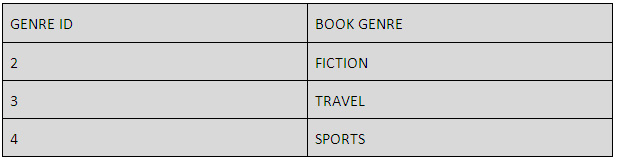

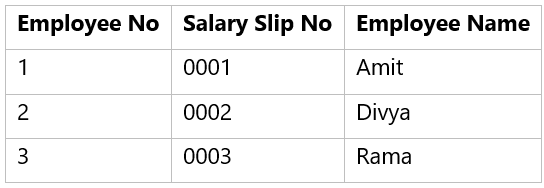

- Database Normalization Example

To understand (DBMS)normalization with example tables, let's assume that we are storing the details of courses and instructors in a university. Here is what a sample database could look like:

|

|

|

|

|

| CS101 | Lecture Hall 20 | Prof. George | +1 6514821924 |

| CS152 | Lecture Hall 21 | Prof. Atkins | +1 6519272918 |

| CS154 | CS Auditorium | Prof. George | +1 6514821924 |

Here, the data basically stores the course code, course venue, instructor name, and instructor’s phone number. At first, this design seems to be good. However, issues start to develop once we need to modify information. For instance, suppose, if Prof. George changed his mobile number. In such a situation, we will have to make edits in 2 places.

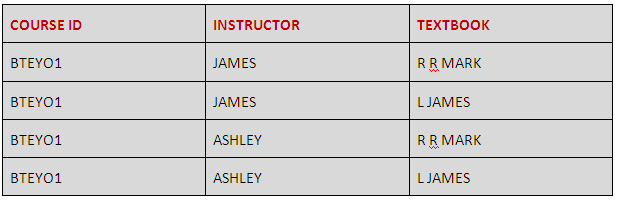

What if someone just edited the mobile number against CS101, but forgot to edit it for CS154? This will lead to stale/wrong information in the database. This problem can be easily tackled by dividing our table into 2 simpler tables:

Table 1 (Instructor):

- Instructor ID

- Instructor Name

- Instructor mobile number

Table 2 (Course):

- Course code

- Course venue

Now, our data will look like the following:

|

|

|

|

| 1 | Prof. George | +1 6514821924 |

| 2 | Prof. Atkins | +1 6519272918 |

|

|

|

|

| CS101 | Lecture Hall 20 | 1 |

| CS152 | Lecture Hall 21 | 2 |

| CS154 | CS Auditorium | 1 |

Basically, we store the instructors separately and in the course table, we do not store the entire data of the instructor. Rather, we store the ID of the instructor. Now, if someone wants to know the mobile number of the instructor, they can simply look up the instructor table. Also, if we were to change the mobile number of Prof. George, it can be done in exactly one place. This avoids the stale/wrong data problem.

Further, if you observe, the mobile number now need not be stored 2 times. We have stored it in just 1 place. This also saves storage. This may not be obvious in the above simple example. However, think about the case when there are hundreds of courses and instructors and for each instructor, we have to store not just the mobile number, but also other details like office address, email address, specialization, availability, etc. In such a situation, replicating so much data will increase the storage requirement unnecessarily.

Suggested Course

Database Management System (DBMS) & SQL : Complete Pack 2024

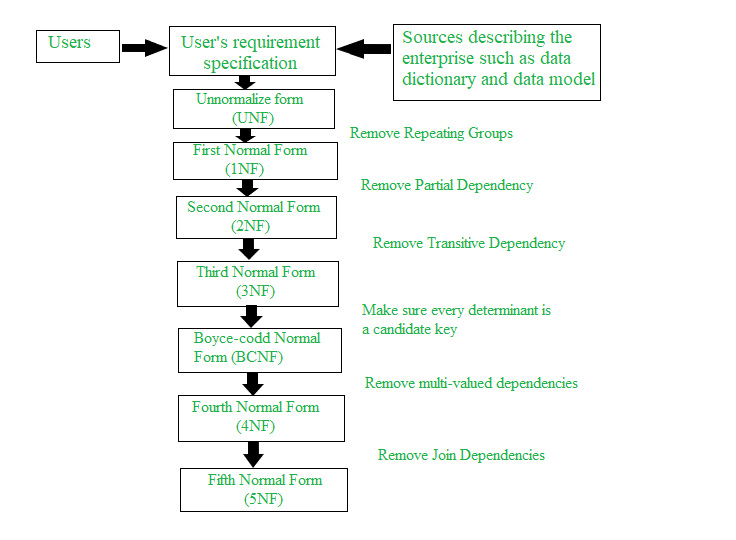

- Types of DBMS Normalization

There are various normal forms in DBMS. Each normal form has an importance that helps optimize the database to save storage and reduce redundancies. We explain normalization in DBMS with examples below.

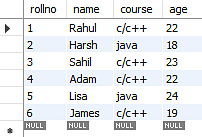

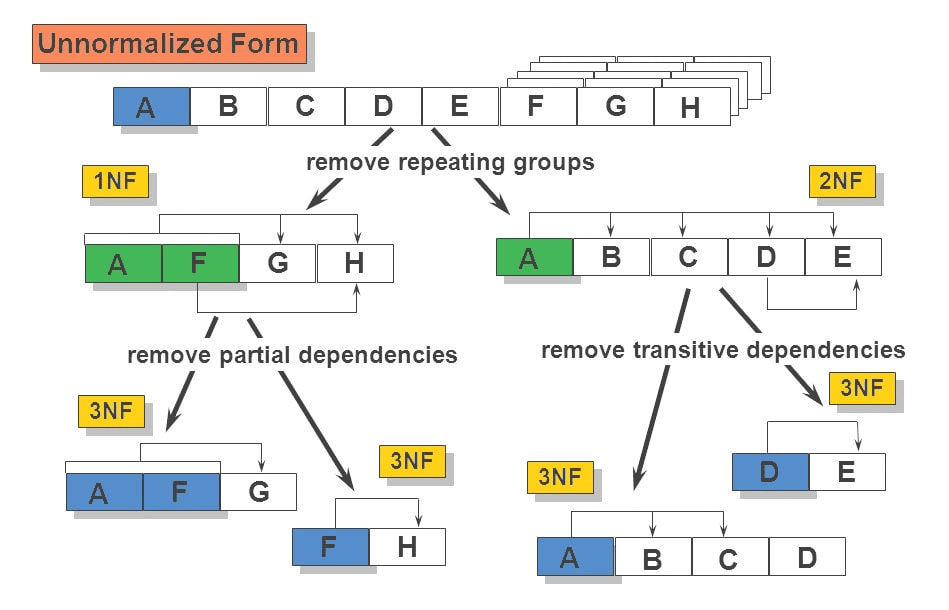

First Normal Form (1NF)

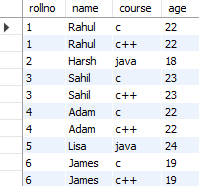

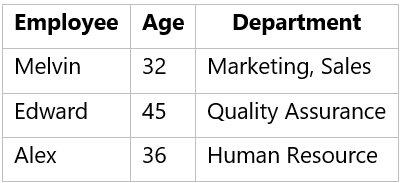

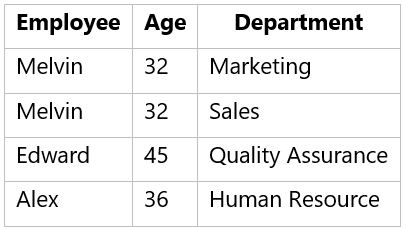

The first normal form simply says that each cell of a table should contain exactly one value. Assume we are storing the courses that a particular instructor takes, we can store it like this:

|

|

|

| Prof. George | (CS101, CS154) |

| Prof. Atkins | (CS152) |

Here, the issue is that in the first row, we are storing 2 courses against Prof. George. This isn’t the optimal way since that’s now how SQL databases are designed to be used. A better method would be to store the courses separately. For instance:

|

|

|

| Prof. George | CS101 |

| Prof. George | CS154 |

| Prof. Atkins | CS152 |

This way, if we want to edit some information related to CS101, we do not have to touch the data corresponding to CS154. Also, observe that each row stores unique information. There is no repetition. This is the First Normal Form.

Data redundancy is higher in 1NF because there are multiple columns with the same in multiple rows. 1NF is not so focused on eliminating redundancy as much as it is focused on eliminating repeating groups.

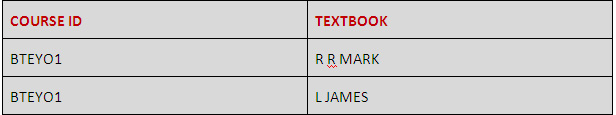

Second Normal Form (2NF)

For a table to be in second normal form, the following 2 conditions must be met:

- The table should be in the first normal form.

- The primary key of the table should have exactly 1 column.

The first point is obviously straightforward since we just studied 1NF. Let us understand the second point: a 1-column primary key. A primary key is a set of columns that uniquely identifies a row. Here, no 2 rows have the same primary keys.

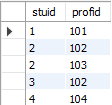

In this table, the course code is unique so that becomes our primary key. Let us take another example of storing student enrollment in various courses. Each student may enroll in multiple courses. Similarly, each course may have multiple enrollments. A sample table may look like this (student name and course code):

|

|

|

| Rahul | CS152 |

| Rajat | CS101 |

| Rahul | CS154 |

| Raman | CS101 |

Here, the first column is the student name and the second column is the course taken by the student.

Clearly, the student name column isn’t unique as we can see that there are 2 entries corresponding to the name ‘Rahul’ in row 1 and row 3. Similarly, the course code column is not unique as we can see that there are 2 entries corresponding to course code CS101 in row 2 and row 4.

However, the tuple (student name, course code) is unique since a student cannot enroll in the same course more than once. So, these 2 columns when combined form the primary key for the database.

As per the second normal form definition, our enrollment table above isn’t in the second normal form. To achieve the same (1NF to 2NF), we can rather break it into 2 tables:

|

|

|

| Rahul | 1 |

| Rajat | 2 |

| Raman | 3 |

Here the second column is unique and it indicates the enrollment number for the student. Clearly, the enrollment number is unique. Now, we can attach each of these enrollment numbers with course codes.

|

|

|

| CS101 | 2 |

| CS101 | 3 |

| CS152 | 1 |

| CS154 | 1 |

These 2 tables together provide us with the exact same information as our original table.

Third Normal Form (3NF)

Before we delve into the details of third normal form, let us understand the concept of a functional dependency on a table.

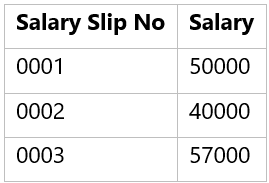

Column A is said to be functionally dependent on column B if changing the value of A may require a change in the value of B. As an example, consider the following table:

|

|

|

|

|

| MA214 | Lecture Hall 18 | Prof. George | CS Department |

| ME112 | Auditorium building | Prof. John | Electronics Department |

Here, the department column is dependent on the professor name column. This is because if in a particular row, we change the name of the professor, we will also have to change the department value. As an example, suppose MA214 is now taken by Prof. Ronald who happens to be from the mathematics department, the table will look like this:

|

|

|

|

|

| MA214 | Lecture Hall 18 | Prof. Ronald | Mathematics Department |

| ME112 | Auditorium building | Prof. John | Electronics Department |

Here, when we changed the name of the professor, we also had to change the department column. This is not desirable since someone who is updating the database may remember to change the name of the professor, but may forget updating the department value. This can cause inconsistency in the database.

Third normal form avoids this by breaking this into separate tables:

|

|

|

|

| MA214 | Lecture Hall 18 | 1 |

| ME112 | Auditorium building, | 2 |

Here, the third column is the ID of the professor who’s taking the course.

|

|

|

|

| 1 | Prof. Ronald | Mathematics Department |

| 2 | Prof. John | Electronics Department |

Here, in the above table, we store the details of the professor against his/her ID. This way, whenever we want to reference the professor somewhere, we don’t have to put the other details of the professor in that table again. We can simply use the ID.

Therefore, in the third normal form, the following conditions are required:

- The table should be in the second normal form.

- There should not be any functional dependency.

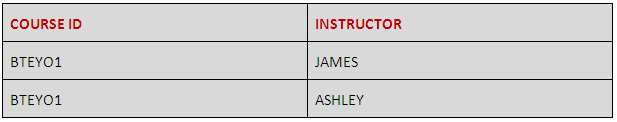

Boyce-Codd Normal Form (BCNF)

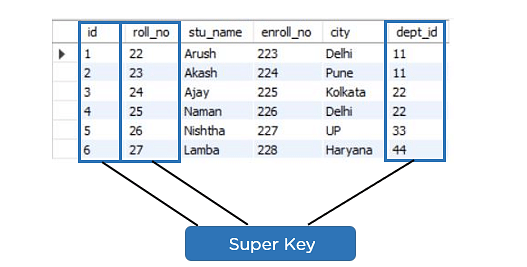

The Boyce-Codd Normal form is a stronger generalization of the third normal form. A table is in Boyce-Codd Normal form if and only if at least one of the following conditions are met for each functional dependency A → B:

- A is a superkey

- It is a trivial functional dependency.

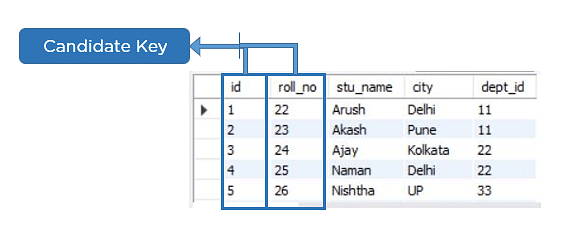

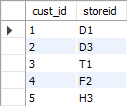

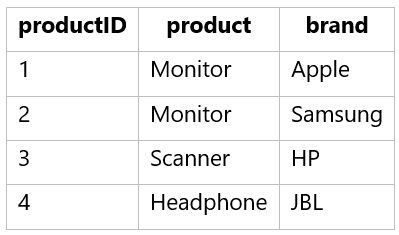

Let us first understand what a superkey means. To understand BCNF in DBMS, consider the following BCNF example table:

Here, the first column (course code) is unique across various rows. So, it is a superkey. Consider the combination of columns (course code, professor name). It is also unique across various rows. So, it is also a superkey. A superkey is basically a set of columns such that the value of that set of columns is unique across various rows. That is, no 2 rows have the same set of values for those columns. Some of the superkeys for the table above are:

- Course code, professor name

- Course code, professor mobile number

A superkey whose size (number of columns) is the smallest is called a candidate key. For instance, the first superkey above has just 1 column. The second one and the last one have 2 columns. So, the first superkey (Course code) is a candidate key.

Boyce-Codd Normal Form says that if there is a functional dependency A → B, then either A is a superkey or it is a trivial functional dependency. A trivial functional dependency means that all columns of B are contained in the columns of A. For instance, (course code, professor name) → (course code) is a trivial functional dependency because when we know the value of course code and professor name, we do know the value of course code and so, the dependency becomes trivial.

Let us understand what’s going on:

A is a superkey: this means that only and only on a superkey column should it be the case that there is a dependency of other columns. Basically, if a set of columns (B) can be determined knowing some other set of columns (A), then A should be a superkey. Superkey basically determines each row uniquely.

It is a trivial functional dependency: this means that there should be no non-trivial dependency. For instance, we saw how the professor’s department was dependent on the professor’s name. This may create integrity issues since someone may edit the professor’s name without changing the department. This may lead to an inconsistent database.

Another example would be if a company had employees who work in more than one department. The corresponding database can be decomposed into where the functional dependencies could be such keys as employee ID and employee department.

Fourth normal form

A table is said to be in fourth normal form if there is no two or more, independent and multivalued data describing the relevant entity.

Fifth normal form

A table is in fifth normal form if:

- It is in its fourth normal form.

- It cannot be subdivided into any smaller tables without losing some form of information.

- Normalization is Important for Database Systems

Normalization in DBMS is useful for designing the schema of a database such that there is no data replication which may possibly lead to inconsistencies. While designing the schema for applications, we should always think about how we can make use of these forms.

If you want to learn more about SQL , check out our post on the best SQL certifications . You can also read about SQL vs MySQL to learn about what the two are. To become a data engineer , you’ll need to learn about normalization and a lot more, so get started today.

- Frequently Asked Questions

1. Does database normalization reduce the database size?

Yes, database normalization does reduce database size. Redundant data is removed, so the database disk storage use becomes smaller.

2. Which normal form can remove all the anomalies in DBMS?

5NF will remove all anomalies. However, generally, most 3NF tables will be free from anomalies.

3. Can database normalization reduce the number of tables?

Database normalization increases the number of tables. This is because we split tables into sub-tables in order to eliminate redundant data.

4. What is the Difference between BCNF and 3NF?

BCNF is an extension of 3NF. The primary difference is that it removes the transitive dependency from a relation.

People are also reading:

- SQL Courses

- SQL Certifications

- Download SQL Cheat Sheet PDF

- Top DBMS Interview Questions & Answers

- Difference between MongoDB vs MySQL

- Create Database in MySQL

- What is Stored Procedure?

- Difference between OLTP vs OLAP

- What is MongoDB?

- Basic SQL Command

Entrepreneur, Coder, Speed-cuber, Blogger, fan of Air crash investigation! Aman Goel is a Computer Science Graduate from IIT Bombay. Fascinated by the world of technology he went on to build his own start-up - AllinCall Research and Solutions to build the next generation of Artificial Intelligence, Machine Learning and Natural Language Processing based solutions to power businesses.

Subscribe to our Newsletter for Articles, News, & Jobs.

Disclosure: Hackr.io is supported by its audience. When you purchase through links on our site, we may earn an affiliate commission.

In this article

- Download SQL Injection Cheat Sheet PDF for Quick References SQL Cheat Sheets

- SQL vs MySQL: What’s the Difference and Which One to Choose SQL MySQL

- What is SQL? A Beginner's Definitive Guide SQL

Please login to leave comments

This video might be helpful to you: https://www.youtube.com/watch?v=B5r8CcTUs5Y

4 years ago

Sagar Jaybhay

Very very nice explanation

Tiago Mendes

Thank you for your the tutorial, it was explained well and easy to folow!

2 years ago

nice explanation

4 months ago

Always be in the loop.

Get news once a week, and don't worry — no spam.

{{ errors }}

{{ message }}

- Help center

- We ❤️ Feedback

- Advertise / Partner

- Write for us

- Privacy Policy

- Cookie Policy

- Change Privacy Settings

- Disclosure Policy

- Terms and Conditions

- Refund Policy

Disclosure: This page may contain affliate links, meaning when you click the links and make a purchase, we receive a commission.

Database Normalization: A Step-By-Step-Guide With Examples

Database normalisation is a concept that can be hard to understand.

But it doesn’t have to be.

In this article, I’ll explain what normalisation in a DBMS is and how to do it, in simple terms.

By the end of the article, you’ll know all about it and how to do it.

So let’s get started.

Want to improve your database modelling skills? Click here to get my Database Normalisation Checklist: a list of things to do as you normalise or design your database!

Table of Contents

What Is Database Normalization?

Database normalisation, or just normalisation as it’s commonly called, is a process used for data modelling or database creation, where you organise your data and tables so it can be added and updated efficiently.

It’s something a person does manually, as opposed to a system or a tool doing it. It’s commonly done by database developers and database administrators.

It can be done on any relational database , where data is stored in tables that are linked to each other. This means that normalization in a DBMS (Database Management System) can be done in Oracle, Microsoft SQL Server, MySQL, PostgreSQL and any other type of database.

To perform the normalization process, you start with a rough idea of the data you want to store, and apply certain rules to it in order to get it to a more efficient form.

I’ll show you how to normalise a database later in this article.

Why Normalize a Database?

So why would anyone want to normalize their database?

Why do we want to go through this manual process of rearranging the data?

There are a few reasons we would want to go through this process:

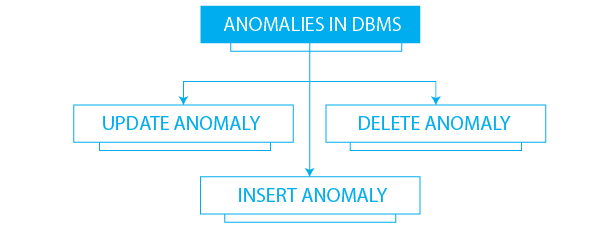

- Make the database more efficient

- Prevent the same data from being stored in more than one place (called an “insert anomaly”)

- Prevent updates being made to some data but not others (called an “update anomaly”)

- Prevent data not being deleted when it is supposed to be, or from data being lost when it is not supposed to be (called a “delete anomaly”)

- Ensure the data is accurate

- Reduce the storage space that a database takes up

- Ensure the queries on a database run as fast as possible

Normalization in a DBMS is done to achieve these points. Without normalization on a database, the data can be slow, incorrect, and messy .

Data Anomalies

Some of these points above relate to “anomalies”.

An anomaly is where there is an issue in the data that is not meant to be there . This can happen if a database is not normalised.

Let’s take a look at the different kinds of data anomalies that can occur and that can be prevented with a normalised database.

Our Example

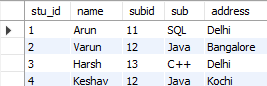

We’ll be using a student database as an example in this article, which records student, class, and teacher information.

Let’s say our student database looks like this:

| 1 | John Smith | 200 | Economics | Economics 1 | Biology 1 | |

| 2 | Maria Griffin | 500 | Computer Science | Biology 1 | Business Intro | Programming 2 |

| 3 | Susan Johnson | 400 | Medicine | Biology 2 | ||

| 4 | Matt Long | 850 | Dentistry |

This table keeps track of a few pieces of information:

- The student names

- The fees a student has paid

- The classes a student is taking, if any

This is not a normalised table, and there are a few issues with this.

Insert Anomaly

An insert anomaly happens when we try to insert a record into this table without knowing all the data we need to know.

For example, if we wanted to add a new student but did not know their course name.

The new record would look like this:

| 1 | John Smith | 200 | Economics | Economics 1 | Biology 1 | |

| 2 | Maria Griffin | 500 | Computer Science | Biology 1 | Business Intro | Programming 2 |

| 3 | Susan Johnson | 400 | Medicine | Biology 2 | ||

| 4 | Matt Long | 850 | Dentistry | |||

We would be adding incomplete data to our table, which can cause issues when trying to analyse this data.

Update Anomaly

An update anomaly happens when we want to update data, and we update some of the data but not other data.

For example, let’s say the class Biology 1 was changed to “Intro to Biology”. We would have to query all of the columns that could have this Class field and rename each one that was found.

| 1 | John Smith | 200 | Economics | Economics 1 | ||

| 2 | Maria Griffin | 500 | Computer Science | Business Intro | Programming 2 | |

| 3 | Susan Johnson | 400 | Medicine | Biology 2 | ||

| 4 | Matt Long | 850 | Dentistry |

There’s a risk that we miss out on a value, which would cause issues.

Ideally, we would only update the value once, in one location.

Delete Anomaly

A delete anomaly occurs when we want to delete data from the table, but we end up deleting more than what we intended.

For example, let’s say Susan Johnson quits and her record needs to be deleted from the system. We could delete her row:

| 1 | John Smith | 200 | Economics | Economics 1 | Biology 1 | |

| 2 | Maria Griffin | 500 | Computer Science | Biology 1 | Business Intro | Programming 2 |

| 4 | Matt Long | 850 | Dentistry |

But, if we delete this row, we lose the record of the Biology 2 class, because it’s not stored anywhere else. The same can be said for the Medicine course.

We should be able to delete one type of data or one record without having impacts on other records we don’t want to delete.

What Are The Normal Forms?

The process of normalization involves applying rules to a set of data. Each of these rules transforms the data to a certain structure, called a normal form .

There are three main normal forms that you should consider (Actually, there are six normal forms in total, but the first three are the most common).

Whenever the first rule is applied, the data is in “ first normal form “. Then, the second rule is applied and the data is in “ second normal form “. The third rule is then applied and the data is in “ third normal form “.

Fourth and fifth normal forms are then achieved from their specific rules.

Alright, so there are three main normal forms that we’re going to look at. I’ve written a post on designing a database , but let’s see what is involved in getting to each of the normal forms in more detail.

What Is First Normal Form?

First normal form is the way that your data is represented after it has the first rule of normalization applied to it. Normalization in DBMS starts with the first rule being applied – you need to apply the first rule before applying any other rules.

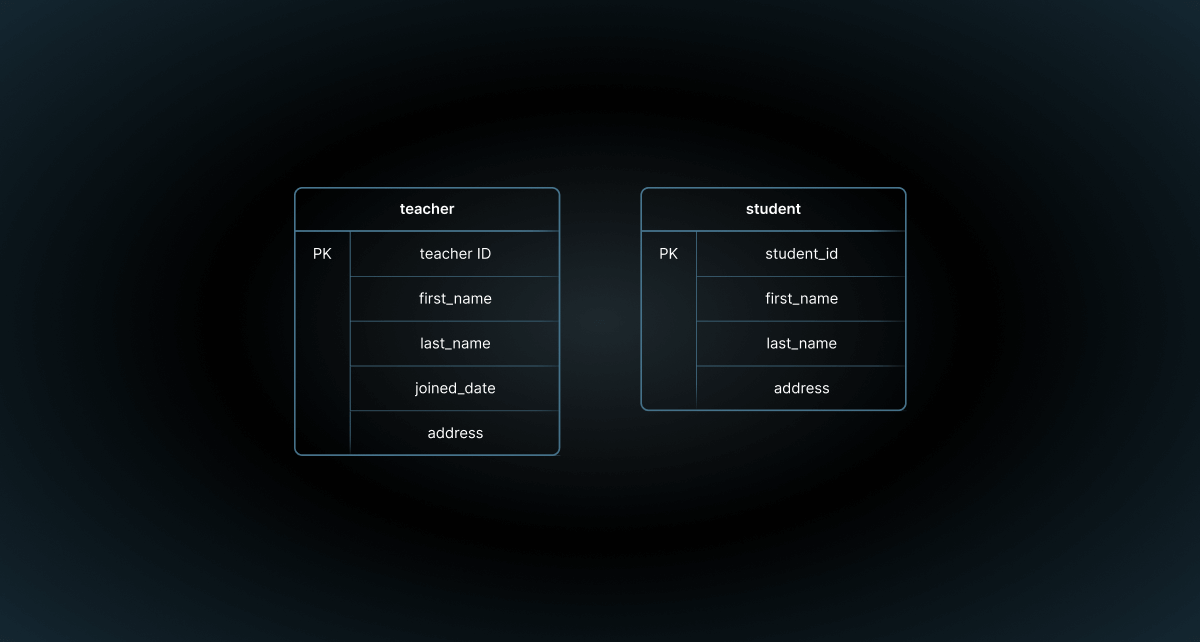

Let’s start with a sample database. In this case, we’re going to use a student and teacher database at a school. We mentioned this earlier in the article when we spoke about anomalies, but here it is again.

Our Example Database

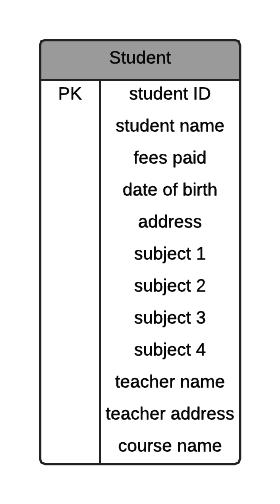

We have a set of data we want to capture in our database, and this is how it currently looks. It’s a single table called “student” with a lot of columns.

| John Smith | 18-Jul-00 | 04-Aug-91 | 3 Main Street, North Boston 56125 | Economics 1 (Business) | Biology 1 (Science) | James Peterson | 44 March Way, Glebe 56100 | Economics | ||

| Maria Griffin | 14-May-01 | 10-Sep-92 | 16 Leeds Road, South Boston 56128 | Biology 1 (Science) | Business Intro (Business) | Programming 2 (IT) | James Peterson | 44 March Way, Glebe 56100 | Computer Science | |

| Susan Johnson | 03-Feb-01 | 13-Jan-91 | 21 Arrow Street, South Boston 56128 | Biology 2 (Science) | Sarah Francis | Medicine | ||||

| Matt Long | 29-Apr-02 | 25-Apr-92 | 14 Milk Lane, South Boston 56128 | Shane Cobson | 105 Mist Road, Faulkner 56410 | Dentistry |

Everything is in one table.

How can we normalise this?

We start with getting the data to First Normal Form.

To apply first normal form to a database, we look at each table, one by one, and ask ourselves the following questions of it:

Does the combination of all columns make a unique row every single time?

What field can be used to uniquely identify the row?

Let’s look at the first question.

No. There could be the same combination of data, and it would represent a different row. There could be the same values for this row and it would be a separate row (even though it is rare).

The second question says:

Is this the student name? No, as there could be two students with the same name.

Address? No, this isn’t unique either.

Any other field?

We don’t have a field that can uniquely identify the row.

If there is no unique field, we need to create a new field. This is called a primary key, and is a database term for a field that is unique to a single row. (Related: The Complete Guide to Database Keys )

When we create a new primary key, we can call it whatever we like, but it should be obvious and consistently named between tables. I prefer using the ID suffix, so I would call it student ID.

This is our new table:

Student ( student ID , student name, fees paid, date of birth, address, subject 1, subject 2, subject 3, subject 4, teacher name, teacher address, course name)

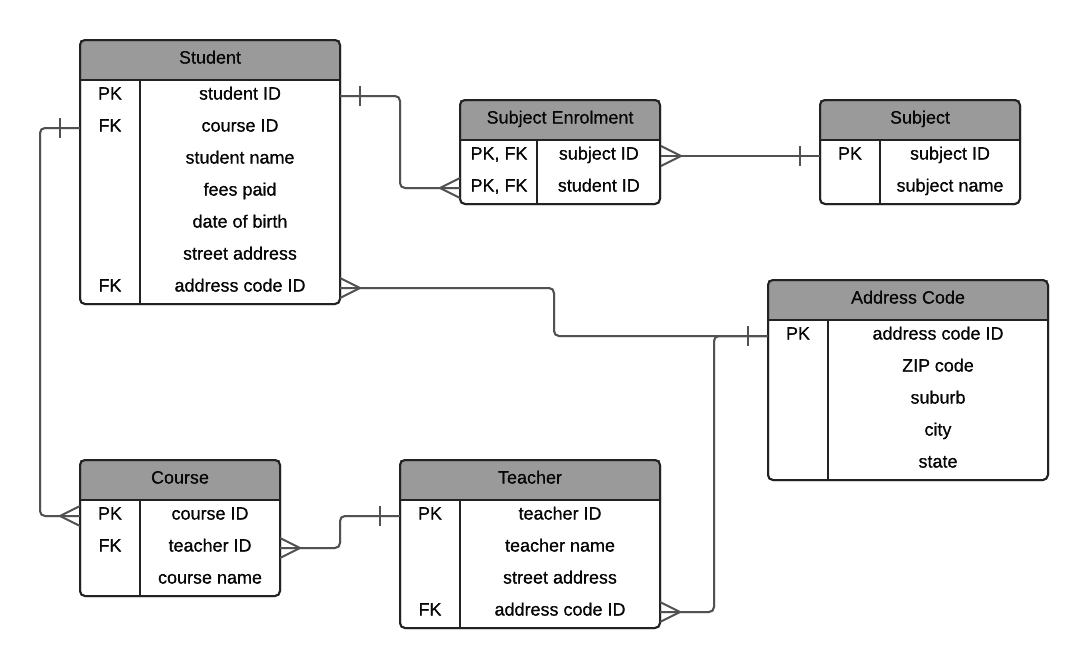

This can also be represented in an Entity Relationship Diagram (ERD):

The way I have written this is a common way of representing tables in text format. The table name is written, and all of the columns are shown in brackets, with the primary key underlined.

This data is now in first normal form.

This example is still in one table, but it’s been made a little better by adding a unique value to it.

Want to find a tool that creates these kinds of diagrams? There are many tools for creating these kinds of diagrams. I’ve listed 76 of them in this guide to Data Modeling Tools , along with reviews, price, and other features. So if you’re looking for one to use, take a look at that list.

What Is Second Normal Form?

The rule of second normal form on a database can be described as:

- Fulfil the requirements of first normal form

- Each non-key attribute must be functionally dependent on the primary key

What does this even mean?

It means that the first normal form rules have been applied. It also means that each field that is not the primary key is determined by that primary key , so it is specific to that record. This is what “functional dependency” means.

Let’s take a look at our table.

Are all of these columns dependent on and specific to the primary key?

The primary key is student ID, which represents the student. Let’s look at each column:

- student name: Yes, this is dependent on the primary key. A different student ID means a different student name.

- fees paid: Yes, this is dependent on the primary key. Each fees paid value is for a single student.

- date of birth: Yes, it’s specific to that student.

- address: Yes, it’s specific to that student.

- subject 1: No, this column is not dependent on the student. More than one student can be enrolled in one subject.

- subject 2: As above, more than one subject is allowed.

- subject 3: No, same rule as subject 2.

- subject 4: No, same rule as subject 2

- teacher name: No, the teacher name is not dependent on the student.

- teacher address: No, the teacher address is not dependent on the student.

- course name: No, the course name is not dependent on the student.

We have a mix of Yes and No here. Some fields are dependent on the student ID, and others are not.

How can we resolve those we marked as No?

Let’s take a look.

First, the subject 1 column. It is not dependent on the student, as more than one student can have a subject, and the subject isn’t a part of the definition of a student.

So, we can move it to a new table:

Subject (subject name)

I’ve called it subject name because that’s what the value represents. When we are writing queries on this table or looking at diagrams, it’s clearer what subject name is instead of using subject.

Now, is this field unique? Not necessarily. Two subjects could have the same name and this would cause problems in our data.

So, what do we do? We add a primary key column, just like we did for student. I’ll call this subject ID, to be consistent with the student ID.

Subject ( subject ID , subject name)

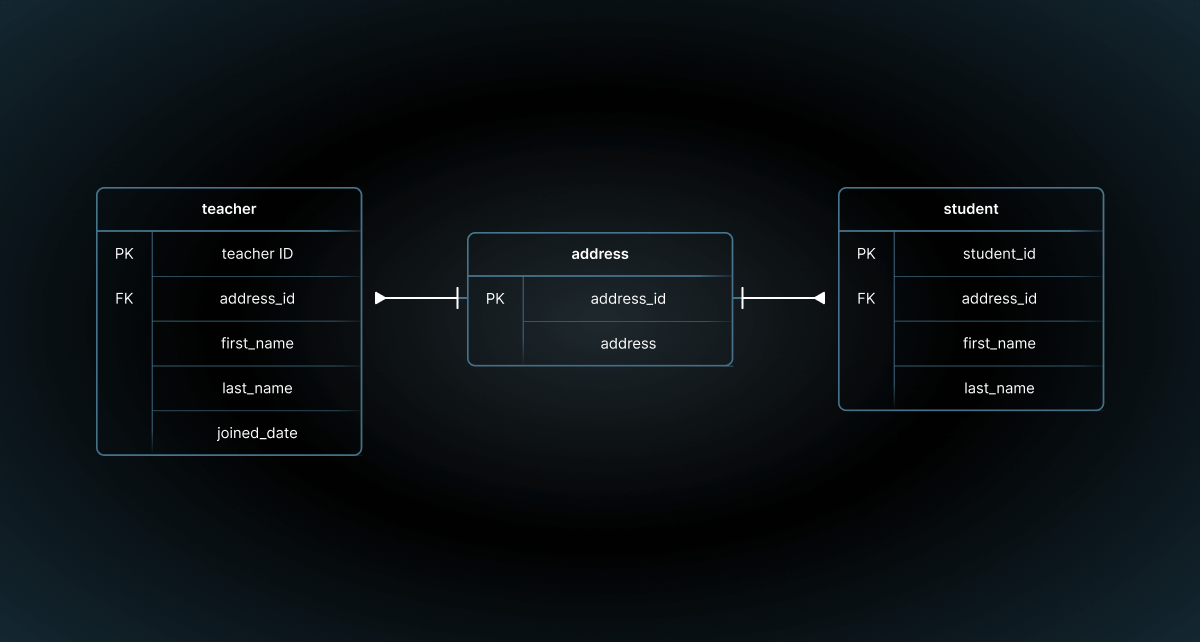

This means we have a student table and a subject table. We can do this for all four of our subject columns in the student table, removing them from the student table so it looks like this:

Student ( student ID , student name, fees paid, date of birth, address, teacher name, teacher address, course name)

But they are in separate tables. How do we link them together?

We’ll cover that shortly. For now, let’s keep going with our student table.

The next column we marked as No was the Teacher Name column. The teacher is separate to the student so should be captured separately. This means we should move it to its own table.

Teacher (teacher name)

We should also move the teacher address to this table, as it’s a property of the teacher. I’ll also rename teacher address to be just address.

Teacher (teacher name, address)

Just like with the subject table, the teacher name and address is not unique. Sure, in most cases it would be, but to avoid duplication we should add a primary key. Let’s call it teacher ID,

Teacher ( teacher ID , teacher name, address)

The last column we have to look at was the Course Name column. This indicates the course that the student is currently enrolled in.

While the course is related to the student (a student is enrolled in a course), the name of the course itself is not dependent on the student.

So, we should move it to a separate table. This is so any changes to courses can be made independently of students.

The course table would look like this:

Course (course name)

Let’s also add a primary key called course ID.

Course ( course ID , course name)

We now have our tables created from columns that were in the student table. Our database so far looks like this:

Student ( student ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name) Teacher ( teacher ID , teacher name, address) Course ( course ID , course name)

Using the data from the original table, our data could look like this:

| 1 | John Smith | 18-Jul-00 | 04-Aug-91 | 3 Main Street, North Boston 56125 |

| 2 | Maria Griffin | 14-May-01 | 10-Sep-92 | 16 Leeds Road, South Boston 56128 |

| 3 | Susan Johnson | 03-Feb-01 | 13-Jan-91 | 21 Arrow Street, South Boston 56128 |

| 4 | Matt Long | 29-Apr-02 | 25-Apr-92 | 14 Milk Lane, South Boston 56128 |

| 1 | Economics 1 (Business) |

| 2 | Biology 1 (Science) |

| 3 | Business Intro (Business) |

| 4 | Programming 2 (IT) |

| 5 | Biology 2 (Science) |

| 1 | James Peterson | 44 March Way, Glebe 56100 |

| 2 | Sarah Francis | |

| 3 | Shane Cobson | 105 Mist Road, Faulkner 56410 |

| 1 | Computer Science |

| 2 | Dentistry |

| 3 | Economics |

| 4 | Medicine |

How do we link these tables together? We still need to know which subjects a student is taking, which course they are in, and who their teachers are.

Foreign Keys in Tables

We have four separate tables, capturing different pieces of information. We need to capture that students are taking certain courses, have teachers, and subjects. But the data is in different tables.

How can we keep track of this?

We use a concept called a foreign key.

A foreign key is a column in one table that refers to the primary key in another table . Related: The Complete Guide to Database Keys .

It’s used to link one record to another based on its unique identifier, without having to store the additional information about the linked record.

Here are our two tables so far:

Student ( student ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name) Teacher ( teacher ID , teacher name, teacher address) Course ( course ID , course name)

To link the two tables using a foreign key, we need to put the primary key (the underlined column) from one table into the other table.

Let’s start with a simple one: students taking courses. For our example scenario, a student can only be enrolled in one course at a time, and a course can have many students.

We need to either:

- Add the course ID from the course table into the student table

- Add the student ID from the student table into the course table

But which one is it?

In this situation, I ask myself a question to work out which way it goes:

Does a table1 have many table2s, or does a table2 have many table1s?

If it’s the first, then table1 ID goes into table 2, and if it’s the second then table2 ID goes into table1.

So, if we substitute table1 and table2 for course and student:

Does a course have many students, or does a student have many courses?

Based on our rules, the first statement is true: a course has many students.

This means that the course ID goes into the student table.

Student ( student ID , course ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name) Teacher ( teacher ID , teacher name, teacher address) Course ( course ID , course name)

I’ve italicised it to indicate it is a foreign key – a value that links to a primary key in another table.

When we actually populate our tables, instead of having the course name in the student table, the course ID goes in the student table. The course name can then be linked using this ID.

| 1 | 3 | John Smith | 200 | 4 Aug 1991 | 3 Main Street, North Boston 56125 |

| 2 | 1 | Maria Griffin | 500 | 10 Sep 1992 | 16 Leeds Road, South Boston 56128 |

| 3 | 4 | Susan Johnson | 400 | 13 Jan 1991 | 21 Arrow Street, South Boston 56128 |

| 4 | 2 | Matt Long | 850 | 25 Apr 1992 | 14 Milk Lane, South Boston 56128 |

This also means that the course name is stored in one place only, and can be added/removed/updated without impacting other tables.

I’ve created a YouTube video to explain how to identify and diagram one-to-many relationships like this:

We’ve linked the student to the course. Now let’s look at the teacher.

How are teachers related? Depending on the scenario, they could be related in one of a few ways:

- A student can have one teacher that teaches them all subjects

- A subject could have a teacher than teaches it

- A course could have a teacher that teaches all subjects in a course

In our scenario, a teacher is related to a course. We need to relate these two tables using a foreign key.

Does a teacher have many courses, or does a course have many teachers?

In our scenario, the first statement is true. So the teacher ID goes into the course table:

Student ( student ID , course ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name) Teacher ( teacher ID , teacher name, teacher address) Course ( course ID , teacher ID , course name)

The table data would look like this:

| 1 | 1 | Computer Science |

| 2 | 3 | Dentistry |

| 3 | 1 | Economics |

| 4 | 2 | Medicine |

This allows us to change the teacher’s information without impacting the courses or students.

Student and Subject

So we’ve linked the course, teacher, and student tables together so far.

What about the subject table?

Does a subject have many students, or does a student have many subjects?

The answer is both.

How is that possible?

A student can be enrolled in many subjects at a time, and a subject can have many students in it.

How can we represent that? We could try to put one table’s ID in the other table:

| 1 | 3 | 1, 2 | John Smith | 200 | 4 Aug 1991 | 3 Main Street, North Boston 56125 |

| 2 | 1 | 2, 3, 2004 | Maria Griffin | 500 | 10 Sep 1992 | 16 Leeds Road, South Boston 56128 |

| 3 | 4 | 5 | Susan Johnson | 400 | 13 Jan 1991 | 21 Arrow Street, South Boston 56128 |

| 4 | 2 | Matt Long | 850 | 25 Apr 1992 | 14 Milk Lane, South Boston 56128 |

But if we do this, we’re storing many pieces of information in one column, possibly separated by commas.

This makes it hard to maintain and is very prone to errors.

If we have this kind of relationship, one that goes both ways, it’s called a many to many relationship . It means that many of one record is related to many of the other record.

Many to Many Relationships

A many to many relationship is common in databases. Some examples where it can happen are:

- Students and subjects

- Employees and companies (an employee can have many jobs at different companies, and a company has many employees)

- Actors and movies (an actor is in multiple movies, and a movie has multiple actors)

If we can’t represent this relationship by putting a foreign key in each table, how can we represent it?

We use a joining table.

This is a table that is created purely for storing the relationships between the two tables.

It works like this. Here are our two tables:

Student ( student ID , course ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name)

And here is our joining table:

Subject_Student ( student ID , subject ID )

It has two columns. Student ID is a foreign key to the student table, and subject ID is a foreign key to the subject table.

Each record in the row would look like this:

| 1 | 1 |

| 1 | 2 |

| 2 | 2 |

| 2 | 3 |

| 2 | 4 |

| 3 | 5 |

Each row represents a relationship between a student and a subject.

Student 1 is linked to subject 1.

Student 1 is linked to subject 2.

Student 2 is linked to subject 2.

This has several advantages:

- It allows us to store many subjects for each student, and many students for each subject.

- It separates the data that describes the records (subject name, student name, address, etc.) from the relationship of the records (linking ID to ID).

- It allows us to add and remove relationships easily.

- It allows us to add more information about the relationship. We could add an enrolment date, for example, to this table, to capture when a student enrolled in a subject.

You might be wondering, how do we see the data if it’s in multiple tables? How can we see the student name and the name of the subjects they are enrolled in?

Well, that’s where the magic of SQL comes in. We use a SELECT query with JOINs to show the data we need. But that’s outside the scope of this article – you can read the articles on my Oracle Database page to find out more about writing SQL.

One final thing I have seen added to these joining tables is a primary key of its own. An ID field that represents the record. This is an optional step – a primary key on a single new column works in a similar way to defining the primary key on the two ID columns. I’ll leave it out in this example.

So, our final table structure looks like this:

Student ( student ID , course ID , student name, fees paid, date of birth, address) Subject ( subject ID , subject name) Subject Enrolment ( student ID , subject ID ) Teacher ( teacher ID , teacher name, teacher address) Course ( course ID , teacher ID , course name)

I’ve called the table Subject Enrolment. I could have left it as the concatenation of both of the related tables (student subject), but I feel it’s better to rename the table to what it actually captures – the fact a student has enrolled in a subject. This is something I recommend in my SQL Best Practices post .

I’ve also underlined both columns in this table, as they represent the primary key. They can also represent a foreign key, which is why they are also italicised.

An ERD of these tables looks like this:

This database structure is in second normal form. We almost have a normalised database.

Now, let’s take a look at third normal form.

What Is Third Normal Form?

Third normal form is the final stage of the most common normalization process. The rule for this is:

- Fulfils the requirements of second normal form

- Has no transitive functional dependency

What does this even mean? What is a transitive functional dependency?

It means that every attribute that is not the primary key must depend on the primary key and the primary key only .

For example:

- Column A determines column B

- Column B determines column C

- Therefore, column A determines C

This means that column A determines column B which determines column C . This is a transitive functional dependency, and it should be removed. Column C should be in a separate table.

We need to check if this is the case for any of our tables.

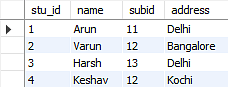

Student ( student ID , course ID , student name, fees paid, date of birth, address)

Do any of the non-primary-key fields depend on something other than the primary key?

No, none of them do. However, if we look at the address, we can see something interesting:

| 3 Main Street, North Boston 56125 |

| 16 Leeds Road, South Boston 56128 |

| 21 Arrow Street, South Boston 56128 |

| 14 Milk Lane, South Boston 56128 |

We can see that there is a relationship between the ZIP code and the city or suburb. This is common with addresses, and you may have noticed this if you have filled out any forms for addresses online recently.

How are they related? The ZIP code, or postal code, determines the city, state, and suburb.

In this case, 56128 is South Boston, and 56125 is North Boston. (I just made this up so this is probably inaccurate data).

This falls into the pattern we mentioned earlier: A determines B which determines C.

Student determines the address ZIP code which determines the suburb.

So, how can we improve this?

We can move the ZIP code to another table, along with everything it identifies, and link to it from the student table.

Our table could look like this:

Student ( student ID , course ID , student name, fees paid, date of birth, street address, address code ID ) Address Code ( address code ID , ZIP code, suburb, city, state)

I’ve created a new table called Address Code, and linked it to the student table. I created a new column for the address code ID, because the ZIP code may refer to more than one suburb. This way we can capture that fact, and it’s in a separate table to make sure it’s only stored in one place.

Let’s take a look at the other tables:

Subject ( subject ID , subject name) Subject Enrolment ( student ID , subject ID )

Both of these tables have no columns that aren’t dependent on the primary key.

Teacher ( teacher ID , teacher name, teacher address)

The teacher table also has the same issue as the student table when we look at the address. We can, and should use the same approach for storing address.

So our table would look like this:

Teacher ( teacher ID , teacher name, street address, address code ID ) Address Code ( address code ID , ZIP code, suburb, city, state)

It uses the same Address Code table as mentioned above. We aren’t creating a new address code table.

Finally, the course table:

Course ( course ID , teacher ID , course name)

This table is OK. The course name is dependent on the course ID.

So, what does our database look like now?

Student ( student ID , course ID , student name, fees paid, date of birth, street address, address code ID ) Address Code ( address code ID , ZIP code, suburb, city, state) Subject ( subject ID , subject name) Subject Enrolment ( student ID , subject ID ) Teacher ( teacher ID , teacher name, street address, address code ID ) Course ( course ID , teacher ID , course name)

So, that’s how third normal form could look if we had this example.

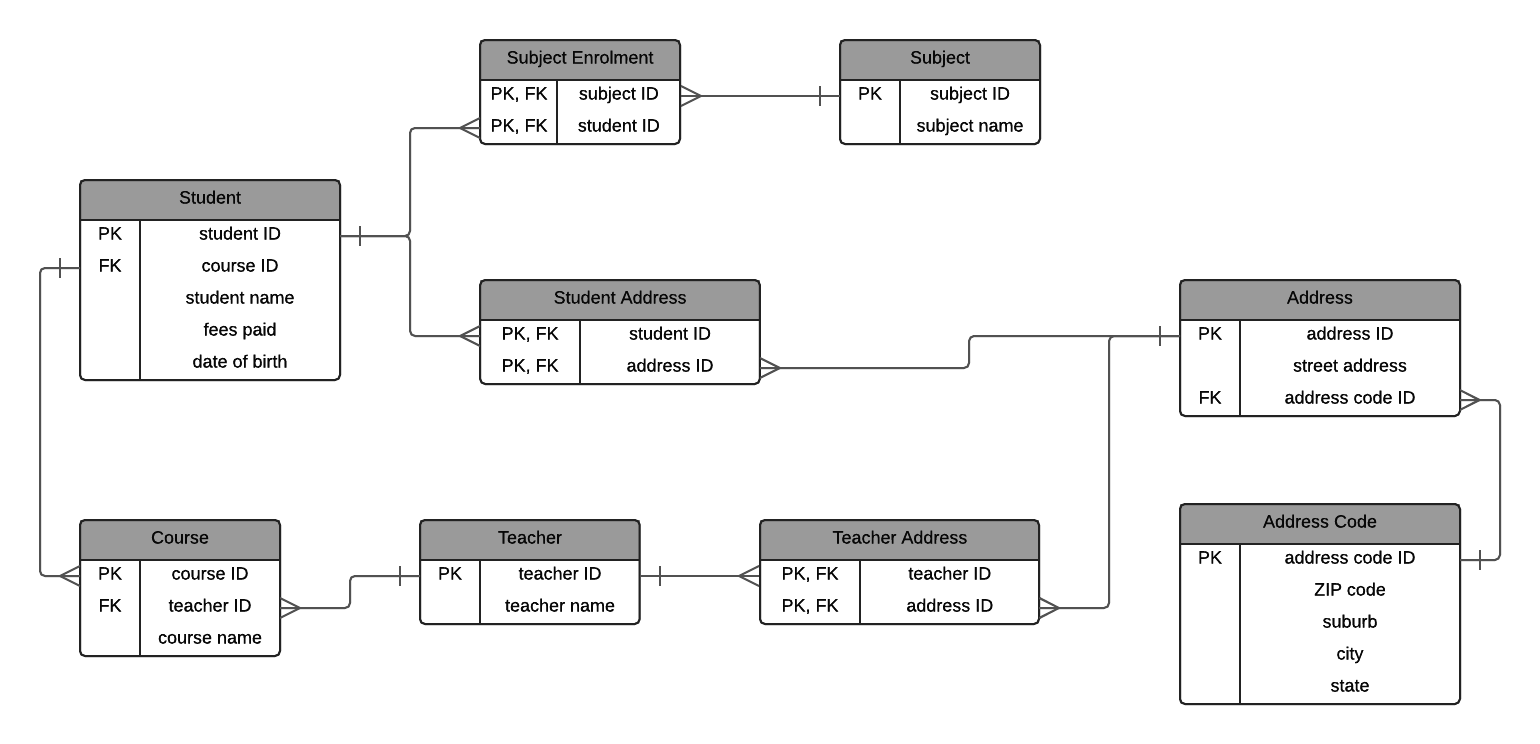

An ERD of third normal form would look like this:

Stopping at Third Normal Form

For most database normalisation exercises, stopping after achieving Third Normal Form is enough .

It satisfies a good relationship rules and will greatly improve your data structure from having no normalisation at all.

There are a couple of steps after third normal form that are optional. I’ll explain them here so you can learn what they are.

Fourth Normal Form and Beyond

Fourth normal form is the next step after third normal form.

What does it mean?

It needs to satisfy two conditions:

- Meet the criteria of third normal form.

- There are no non-trivial multivalued dependencies other than a candidate key.

So, what does this mean?

A multivalued dependency is probably better explained with an example, which I’ll show you shortly. It means that there are other attributes in the table that are not dependent on the primary key, and can be moved to another table.

Our database looks like this:

This meets the third normal form rules.

However, let’s take a look at the address fields: street address and address code.

- Both the student and teacher table have these

- What if a student moves addresses? Do we update the address in this field? If we do, then we lose the old address.

- If an address is updated, is it because they moved? Or is it because there was an error in the old address?

- What if two students have the same street address. Are they actually at the same address? What if we update one and not the other?

- What if a teacher and a student are at the same address?

- What if we want to capture a student or a teacher having multiple addresses (for example, postal and residential)?

There are a lot of “what if” questions here. There is a way we can resolve them and improve the quality of the data.

This is a multivalued dependency.

We can solve this by moving the address to a separate table .

The address can then be linked to the teacher and student tables.

Let’s start with the address table.

Address ( address ID , street address, address code ID )

In this table, we have a primary key of address ID, and we have stored the street address here. The address code table stays the same.

We need to link this to the student and teacher tables. How do we do this?

Do we also want to capture the fact that a student or teacher can have multiple addresses? It may be a good idea to future proof the design. It’s something you would want to confirm in your organisation.

For this example, we will design it so there can be multiple addresses for a single student.

Our tables could look like this:

Student ( student ID , course ID , student name, fees paid, date of birth) Address ( address ID , street address, address code ID ) Address Code ( address code ID , ZIP code, suburb, city, state) Student Address ( address ID, student ID ) Subject ( subject ID , subject name) Subject Enrolment ( student ID , subject ID ) Teacher ( teacher ID , teacher name) Teacher Address ( teacher ID, address ID ) Course ( course ID , teacher ID , course name)

An ERD would look like this:

A few changes have been made here:

- The address code ID has been removed from the Student table, because the relationships between student and address is now captured in the joining table called Student Address.

- The teacher’s address is also captured in the joining table Teacher Address, and the address code ID has been removed from the Teacher table. I couldn’t think of a better name for each of these tables.

- Address still links to address code ID

This design will let you do a few things:

- Store multiple addresses for each student or teacher

- Store additional information about an address relationship to a teach or student, such as an effective date (to track movements over time) or an address type (postal, residential)

- Determine which students or teachers live at the same address with certainty (it’s linked to the same record).

So, that’s how you can achieve fourth normal form on this database.

There are a few enhancements you can make to this design, but it depends on your business rules:

- Combine the student and teacher tables into a person table, as they are both effectively people, but teachers teach a class and students take a class. This table could then link to subjects and define that relationship as “teaches” or “is enrolled in”, to cater for this.

- Relate a course to a subject, so you can see which subjects are in a course

- Split the address into separate fields for unit number, street number, address line 1, address line 2, and so on.

- Split the student name and teacher name into first and last names to help with displaying data and sorting.

These changes could improve the design, but I haven’t detailed them in any of these steps as they aren’t required for fourth normal form.

I hope this explanation has helped you understand what the normal forms are and what normalization in DBMS is. Do you have any questions on this process? Share them in the section below.

Lastly, if you enjoy the information and career advice I’ve been providing, sign up to my newsletter below to stay up-to-date on my articles. You’ll also receive a fantastic bonus. Thanks!

68 thoughts on “Database Normalization: A Step-By-Step-Guide With Examples”

Thanks so much for explaining this concept Ben. To me as a learner, this is the best way to grab this concept.

You broke this down to the last atom. Keep up the good work!

Thanks James, glad you found it helpful!

Absolutely, the best and easiest explanation I have seen. Very helpful.

Saludos Ben, buen post. Podrías por favor revisar la simbología que utilizaste en la relación de las tablas Student y Course, dado que comentaste en las líneas de arriba “significa que la identificación del curso entra en la tabla de estudiantes.” Comenta si la relación sería: Course -< Student

Hi Ronald, Sure, I’ll check this and update the post. (Google Translate: Greetings Ben, good post. Could you please check the symbology you used in the Student and Course table relationship, since you commented on the lines above “it means that the course identification enters the student table.” Comment if the relationship would be: Course – < Student)

Saludos Ben, la simbología de Courses y Student según planteaste es de “1 a n” verifica si sería ” -< " Buen post.

Thanks Ronald! (Google Translate: Greetings Ben, the symbology of Courses and Student as you raised is “1 to n” verifies if it would be “- <" Good post.)

thank you for sharing these things to us , damn i really love it. You guys are really awesome

i dont understand the second normal form linking student id to course id isnt clear

Excellent working example

Glad you found it useful!

This is a nice compilation and a good illustration of how to carry out table normalization. I wish you can provide script samples that represent the ERD you presented here. It will be so much helpful.

Hi DJ, Glad you like the article. Good idea – I’ll create some sample scripts and add them to the post. Thanks, Ben

Good job! This is a great way explaining this topic. You made it look easy to understand. But, one question I have for you is where is a best scenario in real life used the fourth normal form?

Hi Nati, Thanks, I’m glad you like the article. I’m not sure what would be a realistic example of using fourth normal form. I think many 3NF databases can be turned into 4NF, depending on how to want to record values (either a value in a table or an ID to another table). I haven’t used it that often.

Dear Sir’ Can we call the Fourth Normal Form as a BCNF (Boyce Codd Normal Form). Or not?

Hi Subzar, I think Fourth Normal Form is slightly different to BCNF. I haven’t used either method but I know they are a little different. I think 4NF comes after BCNF.

Hey Ben, Your notes are too helpful. Will recommend my other friends for sure. Thanks a lot :)

You’ve done a truly superb job on the majority of this. You’ve used far more details than most people who provide examples do.

Particularly good is the splitting of addresses into a separate table. That is just not done nearly enough.

However, two tables have critical problems: (1) The Student table should not contain Course ID (nor fees paid); there should be a separate Student_Course intersection table. (2) Similarly, the Course table should not have a Teacher ID, likewise a separate Course_Teacher intersection table should be created.

Reasoning (condensed): (1) A student must be able to enroll first, without yet specificying a course. The student’s name and other enrollment data are not related to any specific course. (2) A course has data of its own not related to the teacher: # of credit/lab hours, cost(s), first term offered, last term offered, etc.. Should the only teacher currently teaching a course withdraw from it, the course data should not be lost. Courses have prerequisites, sometimes complex ones, that have nothing to do with who is teaching the course.

Thanks for the feedback Scott and glad you like the post! I understand your reasoning, and yes ideally there would be those two intersection tables. These scenarios are things that we, as developers, would clarify during the design. The example I went with was a simple one where a student must have a course and a course must have a teacher, but in the real world it would cater to your scenarios. Thanks again, Ben

Simple & powerfully conveyed. Thank you.

Glad you found it helpful!

As a grade 11 teacher, I am well aware of the complexities students face when teaching/explaining the concepts within this topic. This article is brilliant and breaks down such a confusing topic quite nicely with examples along the way. My students will definitely benefit from this, thank you so much.

I am just starting out in SQL and this was quite helpful. Thanks.

what a tremendous insights about the normalisation you have explained and its gave me a lot of confedence Thank u some much ben my deepest gratitude for sharing knowledge . you truly proved sharing is caring with regards chandu, india

Came across your material while searching for Normalisation material, as wanting to use my time to improve my Club Membership records, having gained a ‘Database qualification’ some 20 to 30 years years ago, I think, I needed to refresh my memory! Anyway – some queries:

1. Shouldn’t the Student Name be broken down or decomposed into StudentForename and StudentSurname, since doesn’t 1NF require this? 2. Shouldn’t Teacher Name be converted as per 121 above as well?

This would enable records to be retrieved using Surname or Forename

Hi Tim, yes that’s a good point and it would be better to break it into two names for that purpose.

Wow, this is the very first website i finally thoroughly understood normalization. Thanks a lot.

Hello again

I have thought thru the data I need to record, I think, time will tell I suspect. Anyway we run upto 18 competitions, played by teams of 1, 2 3 or 4 members, thus I think that there may be Many to Many relationship between Member and Competition tables, as in Many Member records may be related to Many Competition records [potential for a Pairs or Triples or Fours teams to win all the Competitions they enter], am I correct?

Also should I design the Competition table as CompID, Year, Comp1, Comp 2, Comp3, each Header having the name of the Competition, then I presume a table that links the two, along the lines of:

CompID, Year, MemberID OR MemberID, Year, CompID

Regards, Tim

Hello again, thinking further, I presume that I could create 18 tables, one per Competition to capture the annual results.

Again though, presume my single Comp table (see above) shouldn’t have a column per comp, as this is a repeating group

So do I create a ‘joining table’, that records Year and Comp, another that records Member and Comp and one that records Member and Year.

I may be over thinking it, but as No Comp this year, I think I need to be able to record this, I think

Hi Tim, good question. You could create one table per competition, but you would have 18 tables which have the same structure and different data, which is quite repetitive. I would suggest having a single table for comp, and a column that indicates which competition it is for. It’s OK for a table to have a value that is repeated like this, as it identifies which competition something refers to. Yes you could create joining tables for Year and Comp (if there is a many to many relationship between them) and Member and Comp as well. What’s the relationship between Member and Year?

Thanks for reply, however, would it be easier to say create a Comp table of 18 records, a Comp Type table which has 2 records, that is Annual and One Day, another table for Comp Year, which will record the annual competition results based on:

CompYear – CompTypeID – CompID – MemID

thus each year would create 32 records [10 x 1 + 4 x2 + 2 x3 + 2 x 4], e.g

2019 – 1 – 4 – 1 2019 – 1 – 4 – 2 2019 – 1 – 4 – 3 2019 – 1 – 4 – 4 2019 – 1 – 3 – 1 2019 – 1 – 3 – 2 2019 – 1 – 3 – 3 2019 – 2 – 1 – 1

so members 1 to 4 won the 2019 one day comp Bickford Fours; and members 1 to 3 won the 2019 one day comp Arthur Johnson Triples; and member 1 won the 2019 annual singles championship

Would this work and have I had a light bulb moment?

Hi Tim, yes I think that would work! Storing the comp data separately from the comp type (and so on) will ensure the data is not repeated and is only stored in one place. Good luck!

Ben, Thank you so much! I was only able to grasp the concept of normalization in one hour or so because how you simplified the concepts through a simple running example.

Thanks a lot sir Daniel i have really understood this you are a great teacher

Firstly :Thank you for your website. Secondly: I have still problem with understanding the Second normal form. For example you have written : “student name: Yes, this is dependent on the primary key. A different student ID means a different student name.”. So in your design I am not allowed to have 2 students with same names? What will happen when this, not so uncommon situation occurs?

Glad you like it Wojtek! Yes, in this design you can have two students with the same name. They would have different IDs and different rows in the table, as two people with the same name is common like you mentioned.

This is the best explanation on why and how to normalize Tables… excellent work, maybe the best explanation out there….

Hi, Thanks for the post. That is exactly what I was looking for. But I have a question, how would I insert into student address and teacher address. Best regards

This is amazing, very well explained. Simple example made it easy to understand. Thank you so much!

Thanks sir, this very helpful

Sorry but your example is not in 1NF. 1NF dictates that you cannot have a repeating group. The subjects are a repeating group. Not saying you got the design wrong at the end just that you failed to remove the repeating group in 1NF. When you went to 2NF you made rules for the repeating group multiple times and took care of it but it should have been taken care of in 1NF.

Its so helpful easy to understand

This is great! You make it easy and simple to learn where I can understand.

If you”re going to break address into atoms, (unit, street, city, zip, etc.), would you also break down telephone numbers, (country code, area code, exchange, unit number) into separate fields in another table? How elemental do you go?

Clearly explained :)

Wow c’est vraiment très utile, ça vient d’apporter un plus ma connaissance. Vraiment merci beaucoup pour cet article.

Great post! Just wanted to check – for the ERD diagram in the 2NF example, wouldn’t the relationship between Course and Student be the other way around? A course can have many students, but a student can only be enrolled in one course. So the crows feet symbol should be on the Student table.

Hi Ben, I am hoping you can respond to the question above. I noticed the same and I was hoping to find a correction or explanation in the notes. I second everyone else’s compliments on a great post, too.

From table Student (student ID, course ID, student name, fees paid, date of birth, street address, address code ID) why you put “street address” column remember that “address code ID” is available idenitify address of student i dont understand here

Hi Ben ! ive got a question for you. i didnt understand this part:

So that means in a first normal form duplicate roes are allowed?

I stumbled on this article explaining so succinctly about normalization of database. I must admit that it helped me understand the concept using an example far much better than just theory.

Kudos to you @Ben – You are really a DB star.

Will recommend it to my friends. I am in the process of putting this knowledge into an Employee Management System.

Mmnh ! Thank you sir, it’s very awesome tutor. 👍

The model breaks as soon as you add a 2nd course for a student. CourseID should not be in the student table. You need a separate table that ties studentID and courseID together

That’s a good point. Yes you would need a separate table for that scenario.

The ERDs for the 2nd / 3rd / 4th normal form show the crows foot at the COURSE table, but the STUDENT table receives the FK course_id. The crows foot represents the many end of the relationship, but there is no representation in the course table of any student data point.

Imho, this is where it is broken in addition to what Dan has pointed to.

Loved it – easy explanation. Thank you – love from India

Well done Ben, this is the best normalization tutorial I have ever seen. Thanks so much. keep up the good work.

Thanks for taking the time to create this. This is one of the best breakdowns that I’ve read regarding DB Normalization. However, I can’t really wrap my mind around 4NF as I would like to know how to use it in a real life scenario. Thanks again as this was extremely helpful to me!

Thank you . Very clear explanation

If the statement is true that each student can only have 1 course, then the relationship is not shown correctly in the ERD.

Hi, I don’t quite understand how your example satisfies third normal form. It seems that street address could depend on address ID, which depends upon the primary key. In other words, if address ID were to change, I would assume street address would also have to change. So doesn’t that create transitive dependency?

ii) I also don’t understand why you made two address tables, couldn’t they be combined into one?

Hi ben thanks for superb work.

What I always think that how to determine functional dependency? In this example , After 1NF, we know that course is not functionally dependent on student id because it is day to day example & it make sense.

however While designing DB for client whose domain is unknown to us, how to know effectively the functional dependency from client? What question we should ask as layman?

Thank you very much

This is my last stop to understanding data normalisation after two years of searching and searching. Thousands of tons of thanks Ben.

thanks so much sir have really enjoyed the class and gain alot

Leave a Comment Cancel Reply

Your email address will not be published. Required fields are marked *

This site uses Akismet to reduce spam. Learn how your comment data is processed .

DBMS Normalization: 1NF, 2NF, 3NF Database Example

What is Database Normalization?

Normalization is a database design technique that reduces data redundancy and eliminates undesirable characteristics like Insertion, Update and Deletion Anomalies. Normalization rules divides larger tables into smaller tables and links them using relationships. The purpose of Normalization in SQL is to eliminate redundant (repetitive) data and ensure data is stored logically.

The inventor of the relational model Edgar Codd proposed the theory of normalization of data with the introduction of the First Normal Form, and he continued to extend theory with Second and Third Normal Form. Later he joined Raymond F. Boyce to develop the theory of Boyce-Codd Normal Form.

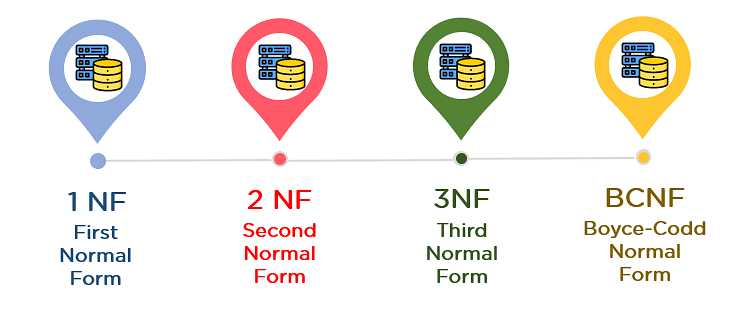

Types of Normal Forms in DBMS

Here is a list of Normal Forms in SQL:

- 1NF (First Normal Form): Ensures that the database table is organized such that each column contains atomic (indivisible) values, and each record is unique. This eliminates repeating groups, thereby structuring data into tables and columns.

- 2NF (Second Normal Form): Builds on 1NF by We need to remove redundant data from a table that is being applied to multiple rows. and placing them in separate tables. It requires all non-key attributes to be fully functional on the primary key.

- 3NF (Third Normal Form): Extends 2NF by ensuring that all non-key attributes are not only fully functional on the primary key but also independent of each other. This eliminates transitive dependency.

- BCNF (Boyce-Codd Normal Form): A refinement of 3NF that addresses anomalies not handled by 3NF. It requires every determinant to be a candidate key, ensuring even stricter adherence to normalization rules.

- 4NF (Fourth Normal Form): Addresses multi-valued dependencies. It ensures that there are no multiple independent multi-valued facts about an entity in a record.

- 5NF (Fifth Normal Form): Also known as “Projection-Join Normal Form” (PJNF), It pertains to the reconstruction of information from smaller, differently arranged data pieces.

- 6NF (Sixth Normal Form): Theoretical and not widely implemented. It deals with temporal data (handling changes over time) by further decomposing tables to eliminate all non-temporal redundancy.

The Theory of Data Normalization in MySQL server is still being developed further. For example, there are discussions even on 6 th Normal Form. However, in most practical applications, normalization achieves its best in 3 rd Normal Form . The evolution of Normalization in SQL theories is illustrated below-

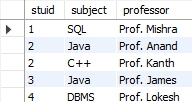

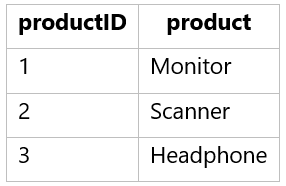

Database Normalization With Examples

Database Normalization Example can be easily understood with the help of a case study. Assume, a video library maintains a database of movies rented out. Without any normalization in database, all information is stored in one table as shown below. Let’s understand Normalization database with normalization example with solution:

Here you see Movies Rented column has multiple values. Now let’s move into 1st Normal Forms:

First Normal Form (1NF)

- Each table cell should contain a single value.

- Each record needs to be unique.

The above table in 1NF-

1NF Example

Before we proceed let’s understand a few things —

What is a KEY in SQL

A KEY in SQL is a value used to identify records in a table uniquely. An SQL KEY is a single column or combination of multiple columns used to uniquely identify rows or tuples in the table. SQL Key is used to identify duplicate information, and it also helps establish a relationship between multiple tables in the database.

Note: Columns in a table that are NOT used to identify a record uniquely are called non-key columns.

What is a Primary Key?

A primary is a single column value used to identify a database record uniquely.

It has following attributes

- A primary key cannot be NULL

- A primary key value must be unique

- The primary key values should rarely be changed

- The primary key must be given a value when a new record is inserted.

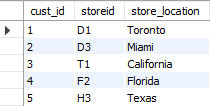

What is Composite Key?

A composite key is a primary key composed of multiple columns used to identify a record uniquely

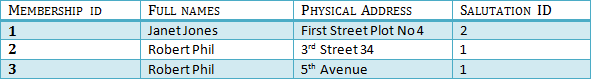

In our database, we have two people with the same name Robert Phil, but they live in different places.

Hence, we require both Full Name and Address to identify a record uniquely. That is a composite key.

Let’s move into second normal form 2NF

Second Normal Form (2NF)

- Rule 1- Be in 1NF

- Rule 2- Single Column Primary Key that does not functionally dependent on any subset of candidate key relation

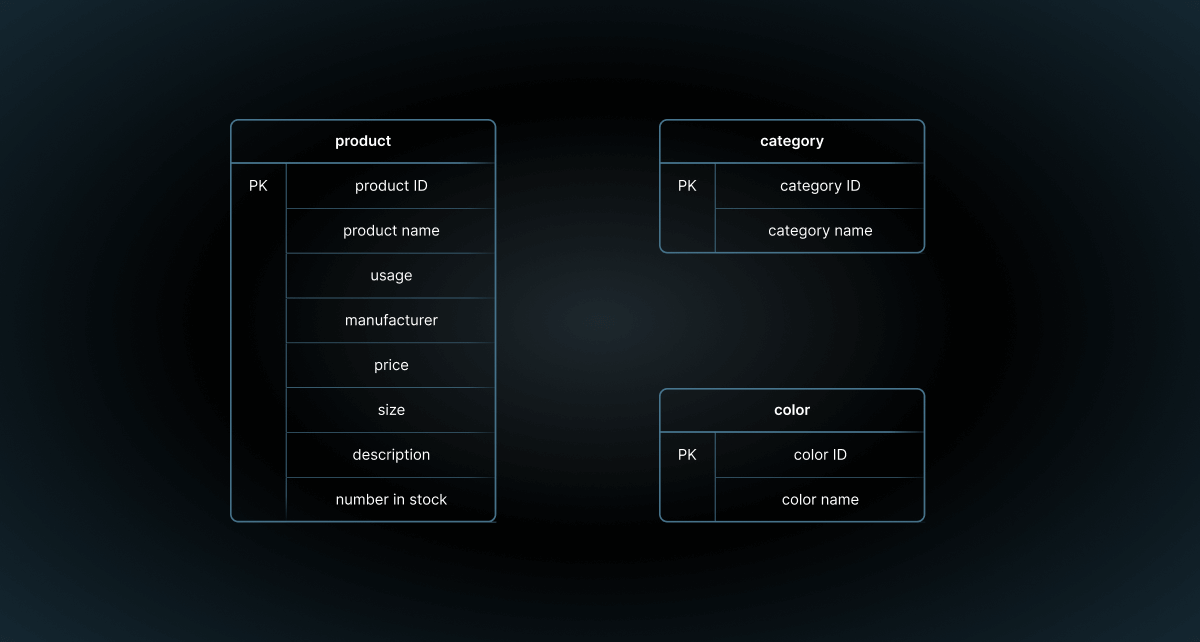

It is clear that we can’t move forward to make our simple database in 2 nd Normalization form unless we partition the table above.

We have divided our 1NF table into two tables viz. Table 1 and Table2. Table 1 contains member information. Table 2 contains information on movies rented.

We have introduced a new column called Membership_id which is the primary key for table 1. Records can be uniquely identified in Table 1 using membership id

Database – Foreign Key

In Table 2, Membership_ID is the Foreign Key

Foreign Key references the primary key of another Table! It helps connect your Tables

- A foreign key can have a different name from its primary key

- It ensures rows in one table have corresponding rows in another

- Unlike the Primary key, they do not have to be unique. Most often they aren’t

- Foreign keys can be null even though primary keys can not

Why do you need a foreign key?

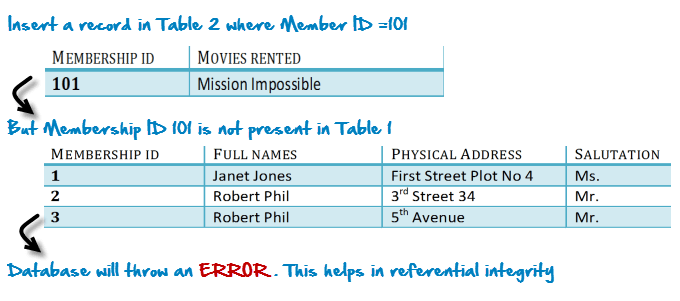

Suppose, a novice inserts a record in Table B such as

You will only be able to insert values into your foreign key that exist in the unique key in the parent table. This helps in referential integrity.

The above problem can be overcome by declaring membership id from Table2 as foreign key of membership id from Table1

Now, if somebody tries to insert a value in the membership id field that does not exist in the parent table, an error will be shown!

What are transitive functional dependencies?

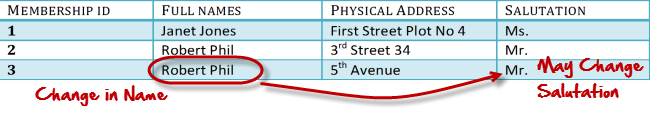

A transitive functional dependency is when changing a non-key column, might cause any of the other non-key columns to change

Consider the table 1. Changing the non-key column Full Name may change Salutation.

Let’s move into 3NF

Third Normal Form (3NF)

- Rule 1- Be in 2NF

- Rule 2- Has no transitive functional dependencies

To move our 2NF table into 3NF, we again need to again divide our table.

3NF Example

Below is a 3NF example in SQL database:

We have again divided our tables and created a new table which stores Salutations.

There are no transitive functional dependencies, and hence our table is in 3NF

In Table 3 Salutation ID is primary key, and in Table 1 Salutation ID is foreign to primary key in Table 3

Now our little example is at a level that cannot further be decomposed to attain higher normal form types of normalization in DBMS. In fact, it is already in higher normalization forms. Separate efforts for moving into next levels of normalizing data are normally needed in complex databases. However, we will be discussing next levels of normalisation in DBMS in brief in the following.

Boyce-Codd Normal Form (BCNF)

Even when a database is in 3 rd Normal Form, still there would be anomalies resulted if it has more than one Candidate Key.

Sometimes is BCNF is also referred as 3.5 Normal Form.

Fourth Normal Form (4NF)

If no database table instance contains two or more, independent and multivalued data describing the relevant entity, then it is in 4 th Normal Form.

Fifth Normal Form (5NF)

A table is in 5 th Normal Form only if it is in 4NF and it cannot be decomposed into any number of smaller tables without loss of data.

Sixth Normal Form (6NF) Proposed

6 th Normal Form is not standardized, yet however, it is being discussed by database experts for some time. Hopefully, we would have a clear & standardized definition for 6 th Normal Form in the near future…

Advantages of Normal Form

- Improve Data Consistency: Normalization ensures that each piece of data is stored in only one place, reducing the chances of inconsistent data. When data is updated, it only needs to be updated in one place, ensuring consistency.

- Reduce Data Redundancy: Normalization helps eliminate duplicate data by dividing it into multiple, related tables. This can save storage space and also make the database more efficient.

- Improve Query Performance: Normalized databases are often easier to query. Because data is organized logically, queries can be optimized to run faster.

- Make Data More Meaningful: Normalization involves grouping data in a way that makes sense and is intuitive. This can make the database easier to understand and use, especially for people who didn’t design the database.

- Reduce the Chances of Anomalies: Anomalies are problems that can occur when adding, updating, or deleting data. Normalization can reduce the chances of these anomalies by ensuring that data is logically organized.

Disadvantages of Normalization

- Increased Complexity: Normalization can lead to complex relations. An extensive number of tables with foreign keys can be difficult to manage, leading to confusion.

- Reduced Flexibility: Due to the strict rules of normalization, there might be less flexibility in storing data that doesn’t adhere to these rules.

- Increased Storage Requirements: While normalization reduces redundancy, It may be necessary to allocate more storage space to accommodate the additional tables and indices.

- Performance Overhead: Joining multiple tables can be costly in terms of performance. The more normalized the data, the more joins are needed, which can slow down data retrieval times.

- Loss of Data Context: Normalization breaks down data into separate tables, which can lead to a loss of business context. Examining related tables is necessary to understand the context of a piece of data.

- Need for Expert Knowledge: Implementing a normalized database requires a deep understanding of the data, the relationships between data, and the normalization rules. This requires expert knowledge and can be time-consuming.

That’s all to SQL Normalization!!!

- Database designing is critical to the successful implementation of a database management system that meets the data requirements of an enterprise system.

- Normalization in DBMS is a process which helps produce database systems that are cost-effective and have better security models.

- Functional dependencies are a very important component of the normalize data process

- Most database systems are normalized database up to the third normal forms in DBMS.

- A primary key uniquely identifies are record in a Table and cannot be null

- A foreign key helps connect table and references a primary key

- Database Design in DBMS Tutorial: Learn Data Modeling

- MySQL Workbench Tutorial: What is, How to Install & Use

- What is a Database? Definition, Meaning, Types with Example

- MySQL SELECT Statement with Examples

- SQL Tutorial for Beginners: Learn SQL in 7 Days

- MySQL Tutorial for Beginners: Learn MySQL Basics in 7 Days

- 17 Best SQL Tools & Software (2024)

- MariaDB vs MySQL – Difference Between Them

Normalization in SQL DBMS: 1NF, 2NF, 3NF, and BCNF Examples

Introduction

If you've started learning about databases, you may have come across the term "normalization" or "database normalization".

In this article, you'll learn what normalization is, what the benefits are, and how to perform normalization in SQL using some database normalization examples.

What is Normalization in SQL?

Normalization, also known as database normalization or normalization in SQL, is a process to improve a design of a database to meet specific rules.

When you create database tables and add columns, you're able to create them in almost any way and configuration that you like.

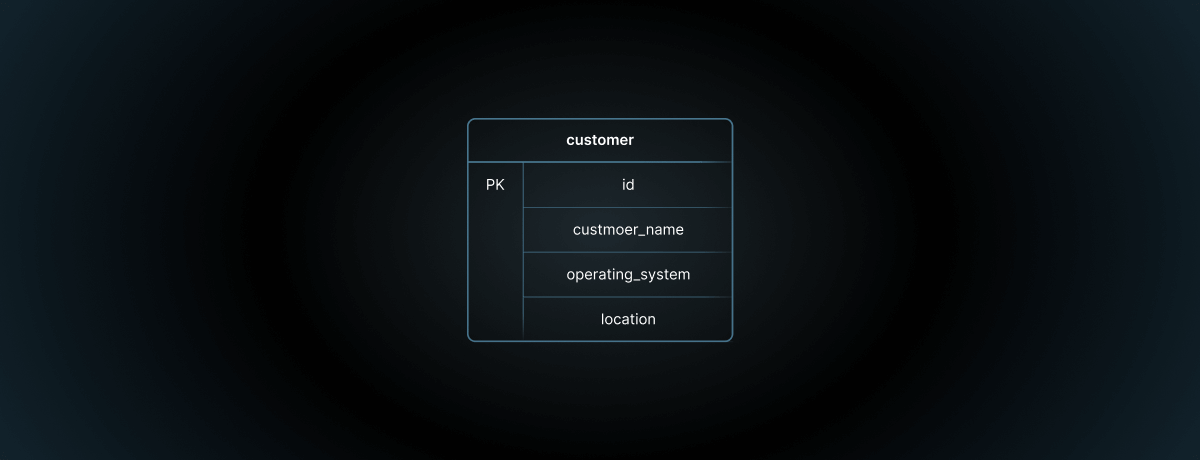

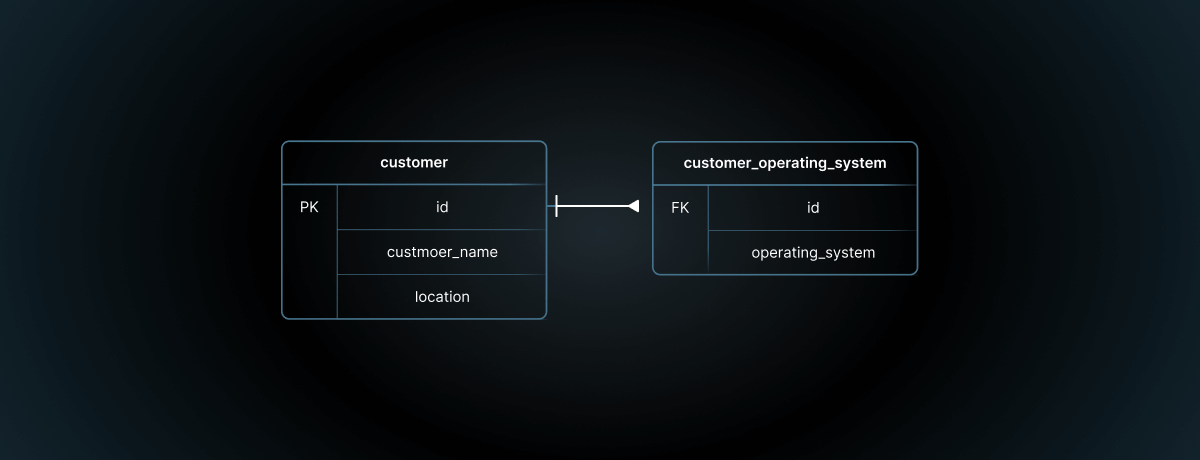

However, to use the benefits of a database and to have an effective design, it helps to follow a certain set of rules. The process of applying these rules is called normalization, and it has several benefits.

The main benefit of normalization is that a piece of information is stored in a single place.

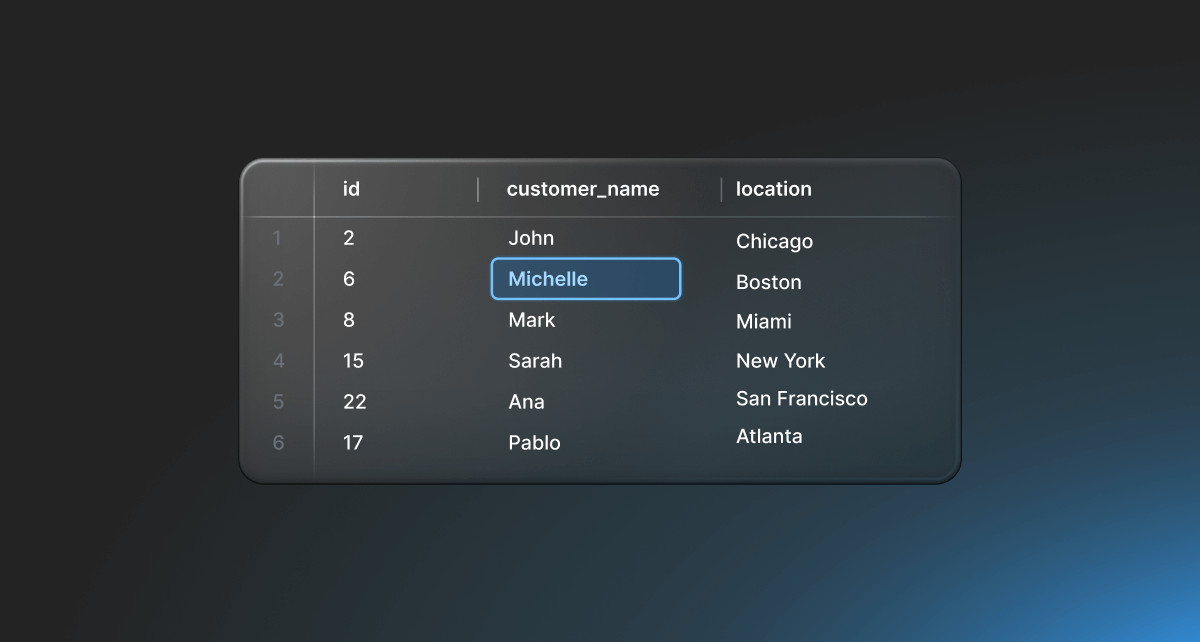

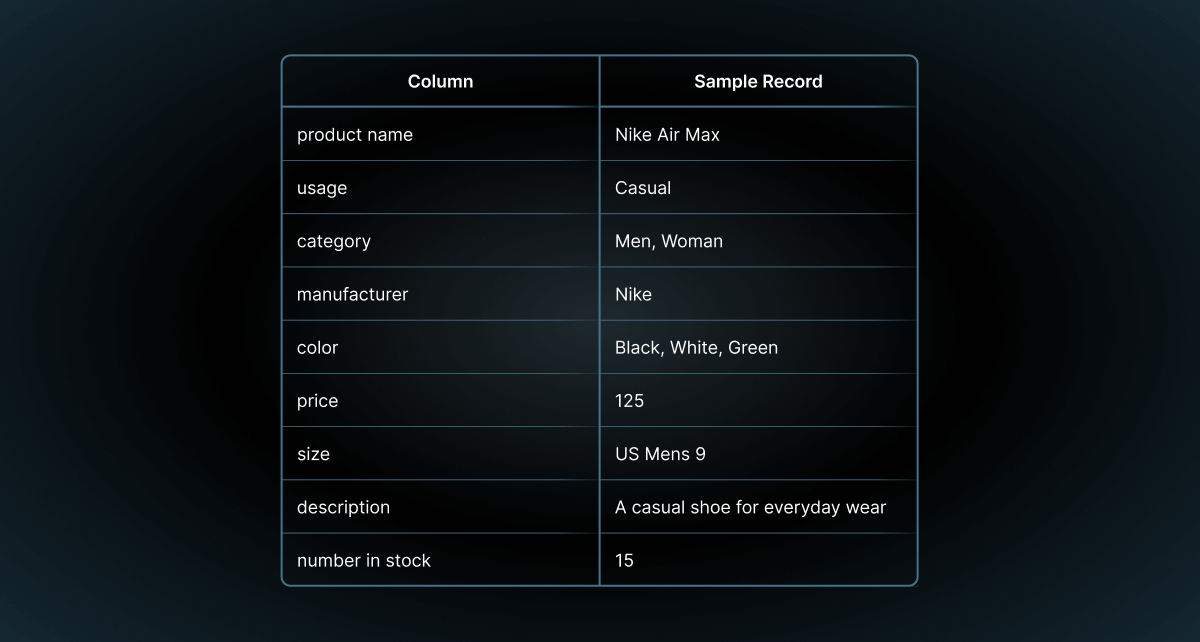

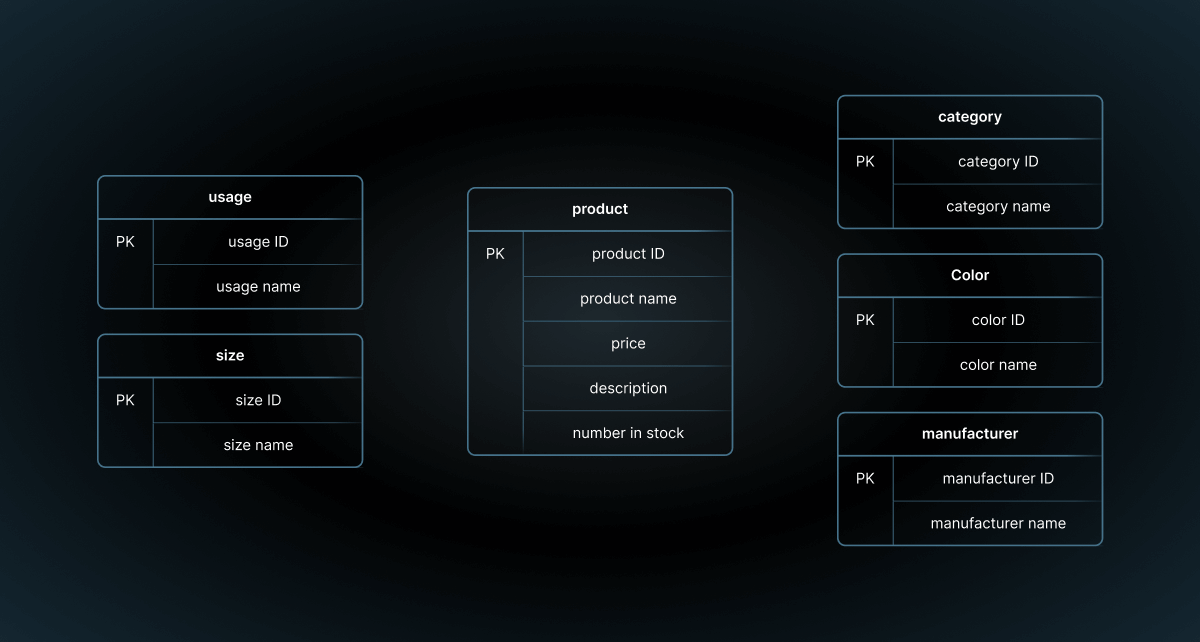

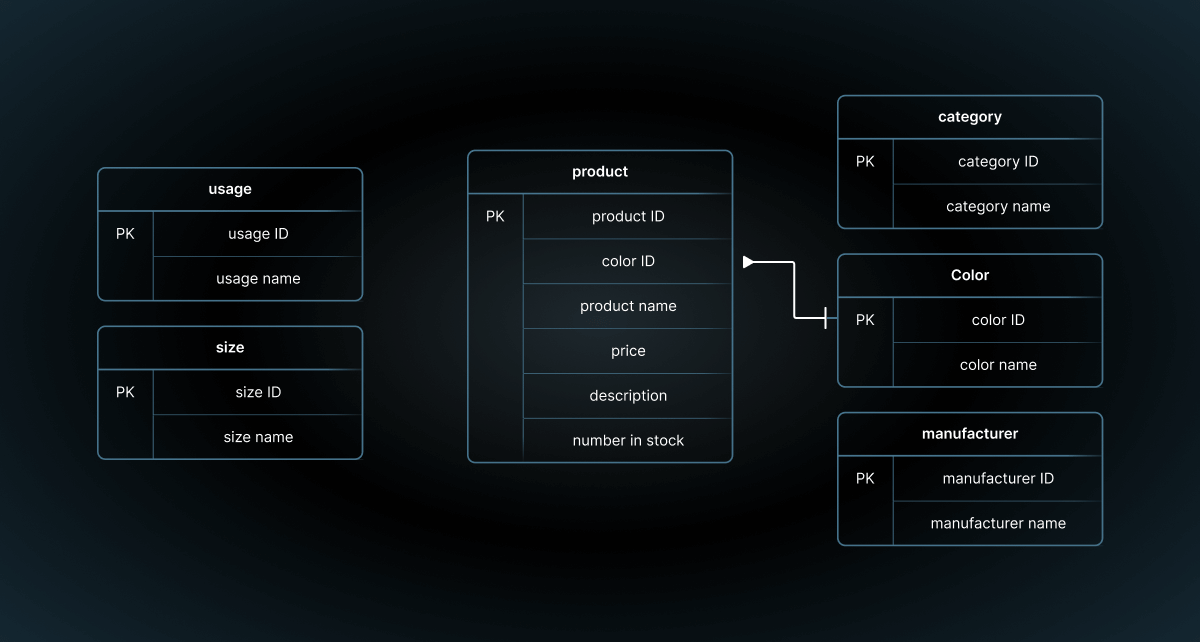

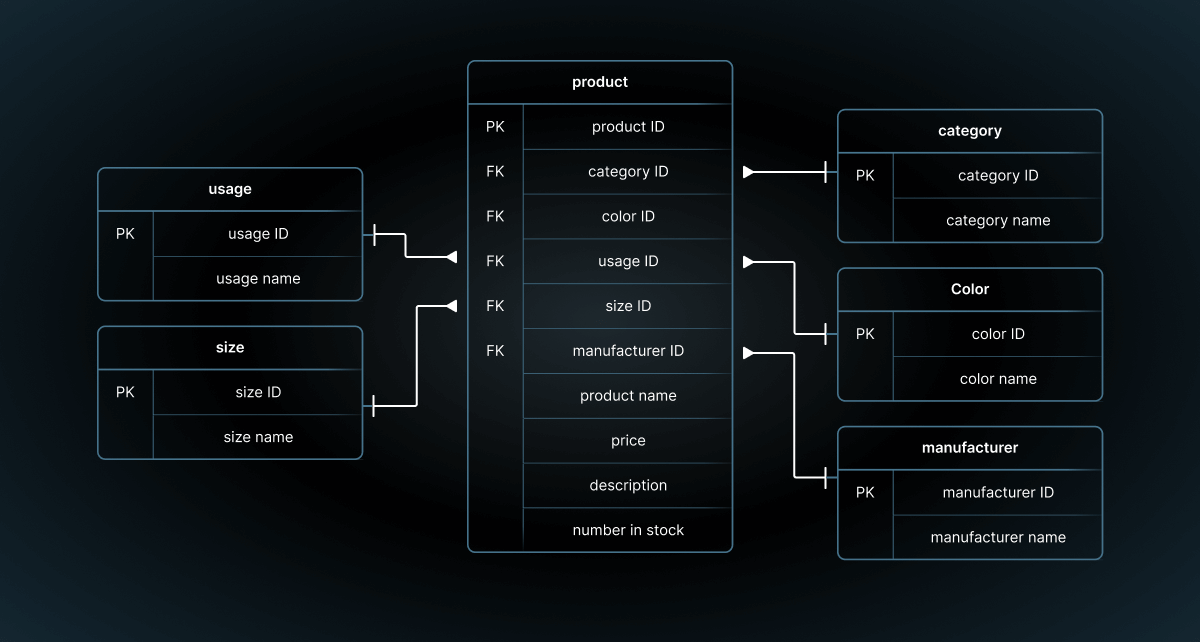

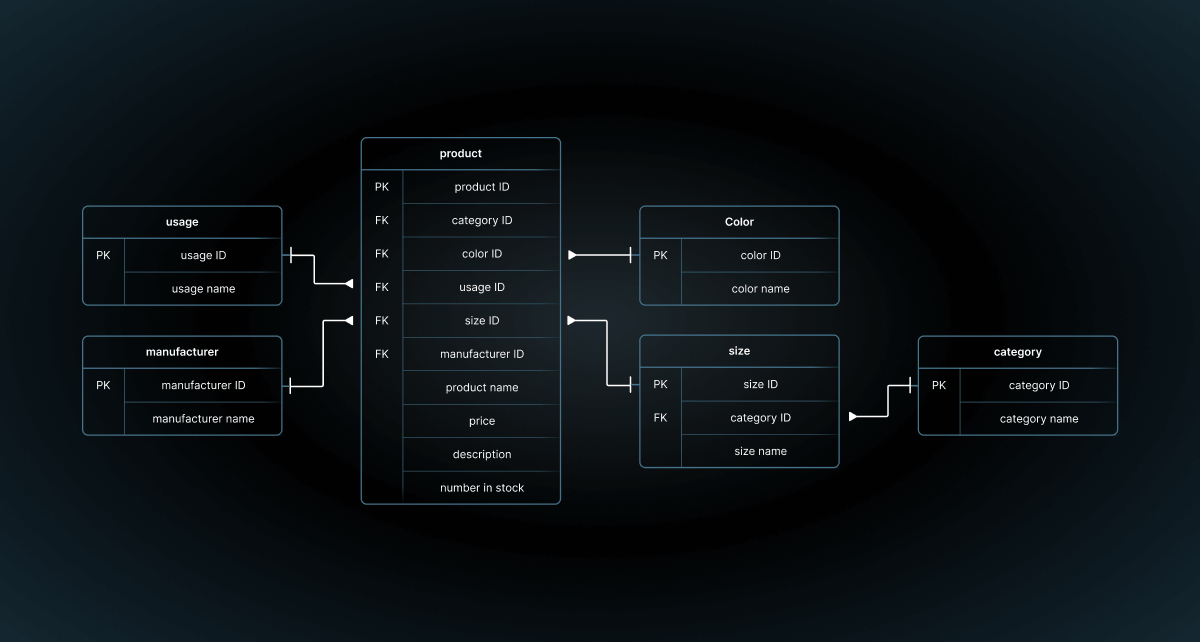

Let's look at an example. Let's say we're designing a database for an eCommerce store that sells shoes.

One of the main items you'll store information about is the name of a product, such as a shoe called "Nike Air Max". On the website for this eCommerce store, you may need to show this in several places:

- A list of products to browse

- A person's shopping cart, which is a list of products they have added to a cart for purchasing